When organizations layer advanced generative AI models over uncurated legacy data architectures, deployments usually hit a brick wall. Teams build promising proofs of concept in isolated sandboxes, but moving those experiments into production exposes the messy reality of enterprise data, and the efforts end up in pilot purgatory. In many cases, the primary barrier isn't technical capability, but the absence of foundational digitalization: clean, governed data with a coherent semantic layer that AI models can leverage.

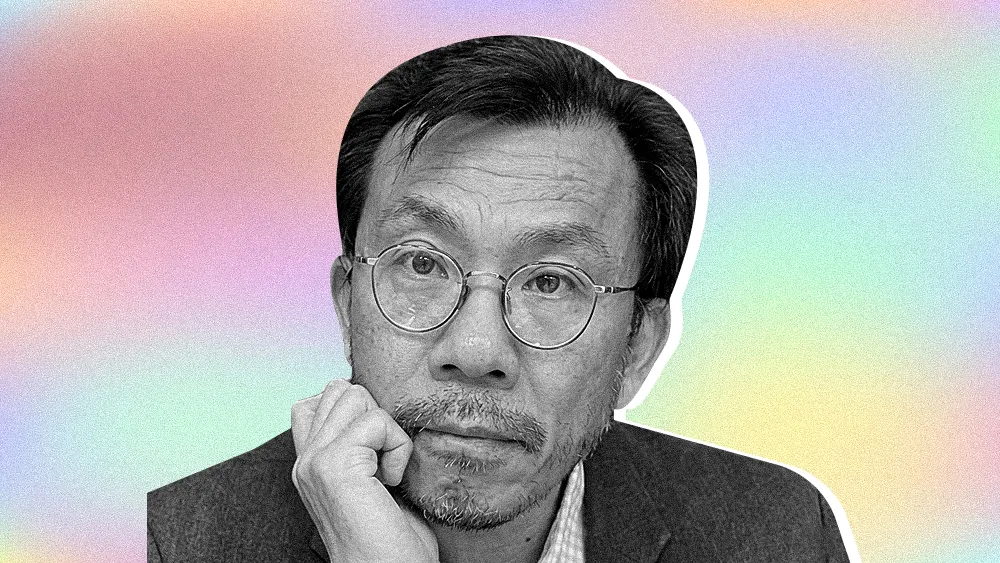

José Freitas has spent over 15 years navigating the friction between technological hype and architectural reality. Currently serving as a Lead Enterprise Architect at the International Air Transport Association (IATA), he specializes in scalable, secure architecture for highly regulated environments. His senior roles at Deloitte and IBM, spanning multiple industries and organizational maturity levels, give him a practitioner's view of what makes enterprise deployments succeed and what causes them to fail. "Your effort is about digitalization. Structuring your data, putting data on top, the definition of data objects, all the things that go before that. Because even if you need to run an AI model, you need to contextualize," said Freitas.

For many organizations, the unglamorous work of establishing data foundations such as governance, quality, and lineage remains a major stumbling block. In regulated sectors such as aviation and healthcare, many leaders view regulator-proof environments as a baseline requirement. In these settings, AI cannot simply be accurate; it must be auditable, explainable, and aligned with the regulatory requirements of each jurisdiction. A hallucinating model doesn't just raise compliance concerns; in safety-critical contexts, unreliable outputs can carry genuine operational risk, demanding clear guardrails and human judgment. Yet some companies push ahead anyway, forcing AI into environments that lack basic data lineage and treating foundational architecture as a skippable step. Homegrown systems often lack the inherent structure and metadata found in mature platforms, turning AI integration into a bottleneck that takes time and precision to untangle. When AI models are trained on poor-quality inputs, they produce unreliable and potentially dangerous outputs.

PDFs and pipe dreams: Freitas drew a stark contrast between highly structured factory floors and legacy environments still running on analog workflows. "If your digitalization is low, AI is not the place to be. There are so many workflows across industries that are based on a hand-signed PDF delivered in-store. There is no structured database that is actually gathering that information." He argued that layering AI on top of unresolved technical debt only masks deeper dysfunction. "With technical debt, it's just trying to sugarcoat a rusty vehicle. You need to understand that if you don't have the basics, you will always fail."

Those underlying architectural issues are frequently compounded by a cultural disconnect. Some executives have limited visibility into the state of underlying data and may assume enterprise systems operate like consumer tools such as ChatGPT, where curated data is already baked into the experience. On the IT side, teams can feel pressure to adopt new capabilities quickly, sometimes before a clear architecture governance framework is in place. To bridge that gap, enterprise architecture can act as a translator between business goals and technical reality. As the connective tissue between business intent and technical execution, it is uniquely positioned to baseline the as-is landscape, expose gaps, and define a realistic transition roadmap before AI initiatives scale. Through business capability mapping, application rationalization moves beyond cost reduction and gains strategic value, ensuring every technology investment aligns with a capability that delivers measurable business outcomes.

Overcoming that friction often requires organizational rewiring tied to EBIT. Deploying AI to mask accumulated technical and architectural debt or to compensate for weak UI/UX rarely addresses the root cause, and often compounds risk with every iteration.

Problem first: Freitas cautioned that IT teams' instinct to reach for new tools often overrides the discipline of listening to users' actual needs. "First of all, avoid solutioning. As enterprise architects, you need to listen to what people actually want." The problem compounds when teams build capabilities around assumptions rather than validated demand. "Don't try to invent solutions for problems that are not being asked, and this is paramount for architecture. People want to solve problems with AI that don't exist."

When teams quickly stitch AI onto legacy systems to solve problems that users may not have raised, operational workflows can spiral out of control. To prevent chaotic sprawl, some organizations are clarifying who actually owns the orchestration graph and establishing a governed orchestration layer to move from insight to action. Applying that discipline allows companies to reset strategy around orchestration and ROI before a broad rollout. Without centralized controls, deploying unauthorized agents can trigger a security crisis and bypass the vital work of embedding governance into the AI-native SDLC.

Jurassic tech: "Across industries, core applications are still running on unsupported technology stacks, without redundancy, and remain a widespread and critical risk. We are in 2026. This seems surreal, but this is still true." For Freitas, examples like that underline why organizations need to identify key systems, bring foundational data into managed platforms, and establish basic continuity controls before layering AI on top.

Shadow IT: Freitas said the proliferation of unsanctioned AI tools has become a daily governance challenge. "Someone is using Kimi, and then people get concerned; they don't know whether it's going through an approved channel or making ungoverned API calls directly to Moonshot AI's servers in China. This is an everyday occurrence." That is exactly the kind of scenario, he said, that centralized AI governance and SDLC guardrails are built to prevent.

Beyond operational headaches, the adoption of ungoverned AI often leads to steep, unexpected financial penalties. The cumulative costs of failed proofs of concept can foreshadow a wider cost-management challenge driven by decentralized, credit-card-based token spending. To navigate those financial risks, a growing number of organizations are adopting frameworks for forecasting AI costs and implementing FinOps tooling to regain control over distributed budgets and usage.

For Freitas, the path forward is about earning the right to pursue AI ambitions thoughtfully and responsibly. That means investing in data strategy before AI strategy and empowering enterprise architecture to enforce the governance that business and IT teams won't impose on themselves. Without that mandate, Freitas argued, organizations will keep cycling through expensive pilots that never reach production, bleeding budget on subscriptions and tokens, and exposing themselves to the same ungoverned sprawl that defined the early cloud era. "Enterprise architecture, when mandated and empowered, is what bridges the gap and holds the balance between business and technology. Skip it, and the foundation doesn't hold."

The views and opinions expressed are those of José Freitas and do not represent the official policy or position of any organization.
