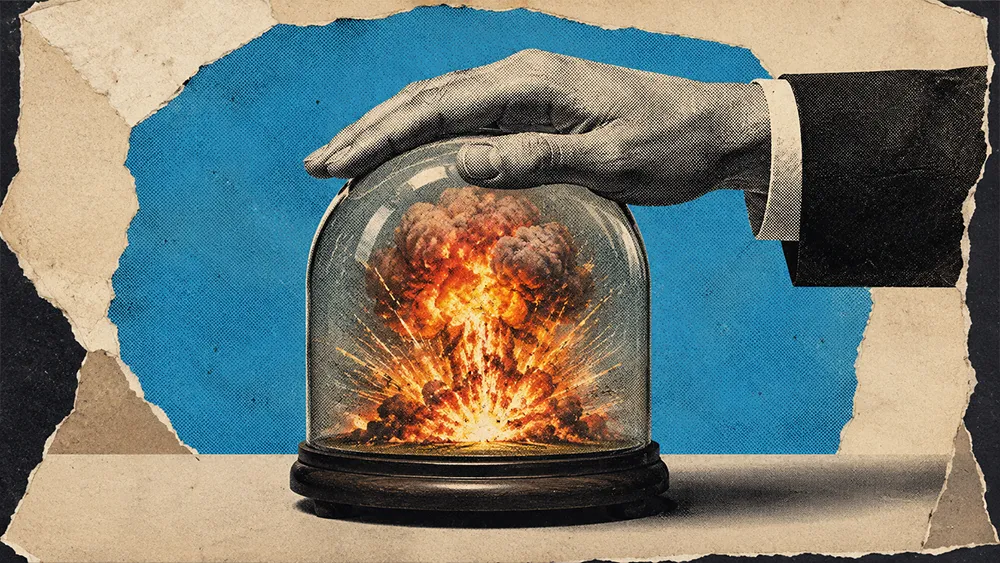

AI capabilities that were experimental 18 months ago are now cheap, widely available, and already being used offensively. The practical implication for enterprises is not that one model crossed a safety threshold. It is that the baseline capability curve rose across the board, and governance models built for a slower era are not keeping pace. Exposure now lives in the lag between capability, deployment, and control.

Hemil Deshmukh is Group Head of Data & AI at Wilson Group, an Australian diversified group where he leads AI strategy, platform architecture, and governance. His career spans more than two decades of data and analytics leadership at Coles, Australia Post, EnergyAustralia, and Deloitte, including programs in digital transformation, revenue assurance, and fraud detection. For Deshmukh, the AI governance conversation starts less with policy design and more with operational resilience.

"Resilience beats policy. You can't out-policy a rising baseline. What matters is an operating model that can absorb mistakes: fast patching, small blast radius, clear owners, rehearsed response paths," said Deshmukh.

The external threat changes internal design

Deshmukh argued that the rising AI capability curve should change how enterprises design and govern AI systems from the inside. The core assumption shifts: anything exposed will be probed.

"AI turns small weaknesses into repeatable, automated failure," Deshmukh said. "So you want safer defaults, fewer sharp edges, and fewer single points of failure." He emphasized that oversight has to move earlier in the lifecycle. "If the first serious conversation happens at deployment, you're already behind. Set decision rights up front: what data it can touch, what actions it can take, and who owns the outcome when it's wrong."

As baseline capability rises, time-to-exploit shrinks. Deshmukh said that boards should expect continuous assurance of control: ongoing testing, clear separation of environments, and a standing assumption that exposed systems will be probed. "We spend less time obsessing over any one model, and more time on control and ownership around the system."

Enterprise hallucination is a context problem

Deshmukh drew a direct line between the reporting-versus-explanation gap in enterprise data stacks and the AI governance failures organizations now face. Most platforms were built to store and move data, not to preserve meaning.

"Definitions live in documents, business rules live in code, and exceptions live in people's heads. That was manageable when humans did the reasoning. With AI in the loop, it isn't." When an AI system hallucinates in an enterprise, he argued, it is often filling in gaps the organization never made explicit: ambiguous entities, inconsistent definitions, missing relationships.

Closing this gap requires platform-level changes. Deshmukh described three requirements: shared definitions that capture what a customer, a risk, or an approval actually means, not just database schemas; curated context that is scoped and versioned rather than assembled from fragments; and explicit decision boundaries that constrain what the system can infer. "If context isn't controlled, enterprises don't get assurance of control," he said. "They get after-the-fact forensics."
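One way to picture those three requirements is as a single versioned object that a platform hands to an AI system. The sketch below is illustrative only: `Definition`, `ContextBundle`, and the example terms and inference names are hypothetical and do not describe Wilson Group's platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Definition:
    """A shared business definition, versioned so changes are traceable."""
    term: str
    meaning: str
    version: int


@dataclass(frozen=True)
class ContextBundle:
    """Scoped, versioned context plus explicit decision boundaries."""
    scope: str
    version: int
    definitions: tuple[Definition, ...]
    allowed_inferences: frozenset[str]

    def lookup(self, term: str) -> Definition:
        # Fail loudly on an undefined term instead of letting the system guess.
        for definition in self.definitions:
            if definition.term == term:
                return definition
        raise KeyError(f"'{term}' is not defined in scope '{self.scope}'")

    def permits(self, inference: str) -> bool:
        # Anything not explicitly allowed is outside the decision boundary.
        return inference in self.allowed_inferences


# Hypothetical example data, for illustration only.
bundle = ContextBundle(
    scope="customer-service",
    version=3,
    definitions=(
        Definition("customer", "A party with at least one active account", 2),
    ),
    allowed_inferences=frozenset({"summarise_account"}),
)
```

The design choice worth noting is the default: a missing definition raises an error and an unlisted inference is refused, so gaps surface as explicit failures rather than as plausible-sounding output.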

Governance fails the same way projects do

Deshmukh identified a pattern: AI governance programs fail for the same reason AI projects do. Teams skip the uncomfortable conversation about what they are actually trying to fix and replace it with process.

"You can spot it when the conversation is about models and process, not outcomes and control," Deshmukh said. "What's the downside if this is wrong? Who owns it? How fast would we detect it? What's the containment plan?" Without those answers, oversight fragments. Central policy, local delivery, and siloed risk functions fail to align, producing slow decisions in good times and confusion in bad times.

The teams that get this right focus oversight on high-consequence decisions, not technology. Central teams set principles and minimum controls. Business owners remain accountable for outcomes with clear escalation paths. Monitoring and stop mechanisms are built into normal operating procedures, not added as a last-stage sign-off. "Good AI oversight is boring on purpose," Deshmukh said. "Explicit ownership, sensible controls, fast recovery."

The CIO pressure test

For CIOs looking to pressure-test their governance posture, Deshmukh offered four questions. Pick one high-stakes decision and run a failure scenario: could you explain it within 24 hours and name the owner? Inventory the decisions AI influences, not the models deployed, because frequency and consequence define where controls belong. Test authority to intervene: if you had to stop or roll back an AI-driven decision right now, who can do it, and how quickly? And check decision visibility: can you replay what information was used, what constraints applied, and why the system acted?
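The last two questions, authority to intervene and decision visibility, can be made concrete with a small record-and-replay log. This is a minimal sketch under stated assumptions: `DecisionRecord`, `DecisionLog`, the role names, and the example decision are all hypothetical, and a real system would need durable storage and proper access control.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """What was used, which constraints applied, and why the system acted."""
    decision_id: str
    owner: str
    inputs: dict
    constraints: tuple
    rationale: str
    recorded_at: datetime


class DecisionLog:
    def __init__(self, stop_authorities):
        self._records = {}
        self._halted = set()
        # Roles allowed to stop a decision (hypothetical names).
        self._stop_authorities = set(stop_authorities)

    def record(self, decision_id, owner, inputs, constraints, rationale):
        entry = DecisionRecord(
            decision_id, owner, dict(inputs), tuple(constraints),
            rationale, datetime.now(timezone.utc),
        )
        self._records[decision_id] = entry
        return entry

    def replay(self, decision_id):
        # Decision visibility: return the stored record for later review.
        return self._records[decision_id]

    def halt(self, decision_id, requested_by):
        # Authority to intervene: only named roles can stop a known decision.
        if requested_by not in self._stop_authorities:
            return False
        if decision_id not in self._records:
            return False
        self._halted.add(decision_id)
        return True

    def is_halted(self, decision_id):
        return decision_id in self._halted


# Hypothetical example, for illustration only.
log = DecisionLog(stop_authorities={"head_of_credit"})
log.record(
    "D-1", "head_of_credit", {"income": 85000},
    ["limit <= 10000"], "within policy limit",
)
```

Calling `log.replay("D-1")` returns the owner, inputs, constraints, and rationale in one place, and `log.halt("D-1", "head_of_credit")` succeeds only for a role listed as a stop authority.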

"If you can answer those four questions, you have real control," Deshmukh concluded. "If you can't, you don't have an AI problem. You have an ownership problem."
