Enterprise AI still has a pilot problem. Many organizations can demonstrate what generative and agentic systems are capable of, yet struggle to convert those proofs of concept into governed, repeatable systems embedded in day-to-day operations. In regulated industries, the gap is even sharper: production requires traceability, human accountability, and alignment to compliance frameworks that pilots were never designed to satisfy. Closing that gap requires more than technical refinement. It requires an operating model that treats AI as an enterprise capability, not a sequence of isolated experiments.

At one of the world's largest financial institutions, that shift is already underway. Hardik Sanchawat is the Vice President of AI Products, Strategy & Applied Research at Citi, where he leads enterprise AI initiatives spanning generative, agentic, and hybrid AI systems. He has more than 13 years of experience across roles at Accenture and IBM and is a co-inventor on an organization-filed patent application. Hardik bridges AI product strategy with hands-on build work, particularly around LLM orchestration and RAG architecture. At Citi, he focuses on moving generative and agentic AI from experimentation into production, where governance, risk management, and human accountability take priority. He described an approach to scaling AI in banking that starts with the operating model, not the model itself.

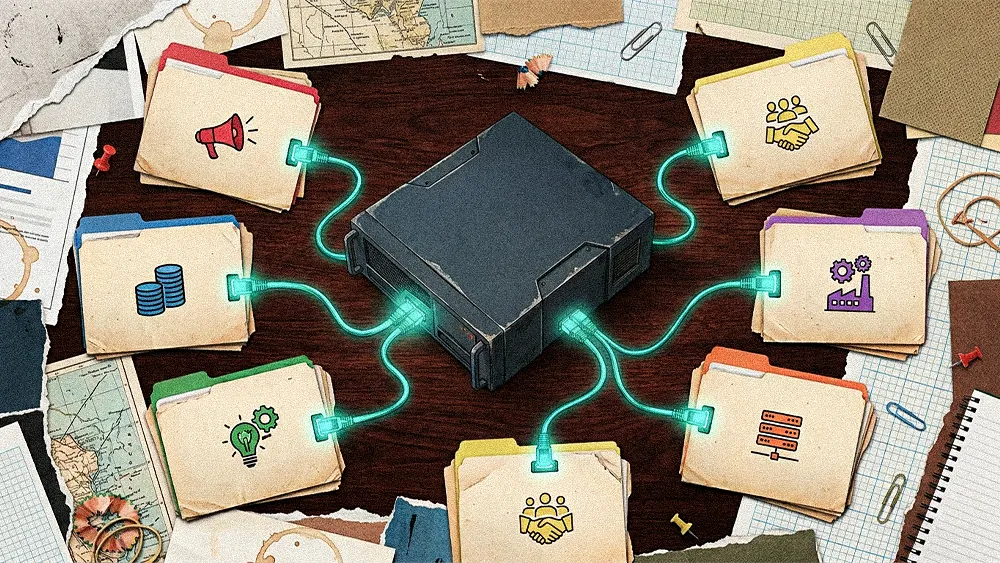

In Hardik's view, scale depends on getting the operating model right. "If everything is decentralized, you get fragmented efforts, duplicated work, and uneven control. If everything is centralized, you risk slowing down innovation and drifting away from real business needs," he said. "The most effective model is usually a governed platform with federated execution." In practice, that means the central AI platform owns the enterprise rails—security evaluation, model access, policy controls, and observability—while business units apply those shared capabilities to domain-specific workflows in areas such as risk reporting, operations, and customer service.

The architecture matters, but Hardik emphasized that the starting point matters just as much. When asked what advice he would offer leaders navigating this transition, he pointed to discipline over breadth.

From demo to deployment: "Start with a small number of high-value workflows where AI can materially improve productivity, service quality, decision making, or risk reduction," he said. "Build those on a reusable platform foundation rather than disconnected point solutions, and make governance, measurement, and business ownership part of the design from the beginning, not something added later." That approach reflects a broader pattern he sees across successful deployments: the organizations that scale are not the ones running the most experiments, but the ones turning AI into a trusted enterprise capability that is repeatable, governed, and clearly linked to business value.

Choosing where to deploy AI is only half the challenge. Equally critical is how organizations measure whether those deployments are delivering real workflow improvements or simply creating activity.

Counting the right beans: "One of the biggest missteps in enterprise AI is confusing activity with impact," Hardik said. Counting pilot deployments, active users, or prompt volume may satisfy early reporting needs, but those metrics rarely reflect what leadership actually needs to know. The more important question is whether AI is driving measurable outcomes tied to real business performance.

Passing the real-world test: To illustrate the distinction, Hardik pointed to a common example: an AI assistant that helps a service team draft responses faster. "The real value question is whether it also improves handling time, resolution quality, and customer outcomes without creating more rework or risk downstream," he said. In his framing, the test is not whether people use the AI, but whether the business performs better because of it.

Even when the right metrics are in place, the threshold for production in regulated industries extends well beyond performance. The bar changes when a knowledge assistant evolves into a compliance copilot supporting policy interpretation, and changes again when that system begins interacting with live workflows.

A higher bar: "If an AI copilot is being used for compliance review or policy interpretation, the key question is not only whether it produces a good summary," Hardik said. "The real question is whether the process is traceable, whether a human remains accountable, and whether there is a clear fallback path if the output is incomplete or incorrect." In regulated environments, those requirements must be satisfied before an AI-assisted workflow reaches production.

From assistance to action: The challenge becomes even more complex with agentic AI. "Systems are no longer just generating content. They may be invoking tools, coordinating actions, or influencing live workflows," Hardik explained. As agents begin triggering downstream tasks, it is no longer enough to ask whether an answer is correct. The more important question is whether the action is appropriate, authorized, bounded, and reversible.

That shift is reshaping how enterprises think about defensible AI as systems move from content generation toward execution. Once an assistant can route tickets, trigger tasks, or interact with downstream systems, traditional guardrails focused only on output quality or hallucination risk are no longer sufficient. Enterprises instead need deliberate permissions, approval boundaries, logging, monitoring, and escalation paths to support responsible adoption of agentic workflows.
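To make those controls concrete, here is a minimal Python sketch of a gate that sits between an agent and the systems it can touch. It is an illustration only, not a description of Citi's implementation: the names `ActionGate`, `ActionRequest`, and `Decision`, the agent name, and the example actions are all hypothetical. The gate checks whether an action is permitted for the agent, sends irreversible or sensitive actions to a human for approval, and logs every decision.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"  # escalate to a human approver
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    agent: str
    action: str
    reversible: bool


@dataclass
class ActionGate:
    # Hypothetical policy: actions each agent is permitted to request,
    # and the subset that always needs human sign-off.
    allowed: dict[str, set[str]]
    approval_required: set[str]
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def check(self, request: ActionRequest) -> Decision:
        permitted = self.allowed.get(request.agent, set())
        if request.action not in permitted:
            decision = Decision.DENY  # not authorized at all
        elif request.action in self.approval_required or not request.reversible:
            decision = Decision.NEEDS_APPROVAL  # bounded: a human decides
        else:
            decision = Decision.ALLOW
        # Every decision is recorded, including denials.
        self.audit_log.append((request.agent, request.action, decision.value))
        return decision


gate = ActionGate(
    allowed={"service-assistant": {"route_ticket", "close_account"}},
    approval_required={"close_account"},
)

routed = gate.check(ActionRequest("service-assistant", "route_ticket", reversible=True))
closed = gate.check(ActionRequest("service-assistant", "close_account", reversible=False))
unknown = gate.check(ActionRequest("unregistered-agent", "route_ticket", reversible=True))
```

In this sketch, routing a ticket is allowed, closing an account is escalated for approval, and a request from an unregistered agent is denied. All three outcomes land in `audit_log`. A production system would add monitoring and rollback, which are omitted here.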

The trajectory points toward bounded autonomy within a well-governed operating model, where the strength of the controls matters as much as the strength of the capability. "In regulated environments, capability matters, but controllability is what earns trust," Hardik concluded.
