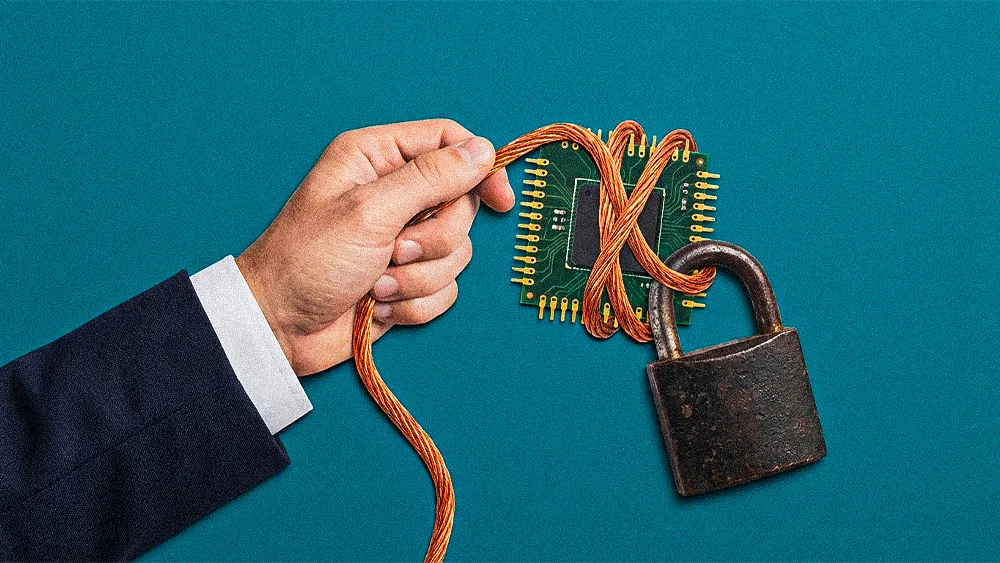

The companies that sprinted fastest into AI are now pausing to ask what it actually delivered. After a surge of adoption fueled more by competitive urgency than by strategic intent, a recalibration is taking hold. Leaders are scrutinizing their AI portfolios and discovering that many deployments lack a clear, measurable return. For security executives, the implications run deeper. AI layers new forms of risk onto environments where governance, ownership, and core controls never fully matured, forcing long-deferred accountability conversations to the surface.

Ricardo Bastos is a Cybersecurity Engineering Manager at TELUS. A CISSP-, CISM-, and CCSP-certified security professional with more than two decades of IT experience, Bastos previously led NIST CSF assessments for critical infrastructure clients at EY Canada and managed a global ransomware recovery spanning 5,000 assets in seven countries at Sierra Wireless. His career has centered on building security programs aligned with governance frameworks, programs designed to translate technical risk into terms executives can act on.

"Security risks are business risks. If we're not at the table understanding revenue streams and critical processes, we can't build controls that truly protect the business," Bastos said. That conviction shapes his view that the current AI correction is not a technology problem. It is a strategy and accountability problem that predates generative AI entirely and now compounds with every new deployment.

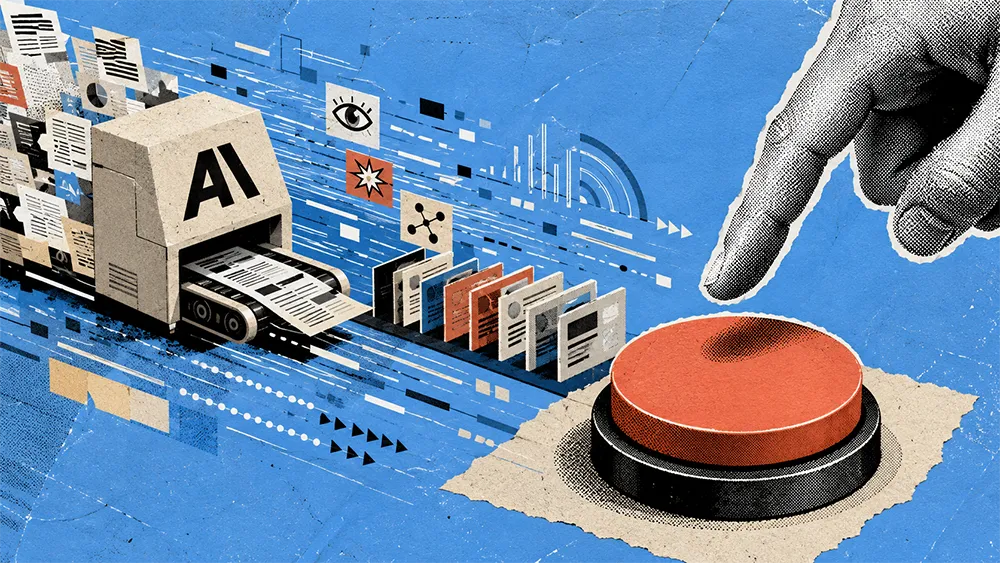

Correction, not collapse: Bastos described a market recalibrating after years of unstructured adoption. "Almost every company jumped into AI, but without clear expectations or a framework on return on investment," he said. "Now I see a correction. Companies are trying to study whether they are actually gaining something from AI, or if it is just something they say they have but don't know how to measure." That correction is healthy, he argued, but only if it leads to real accountability for outcomes rather than another round of rebranded pilots.

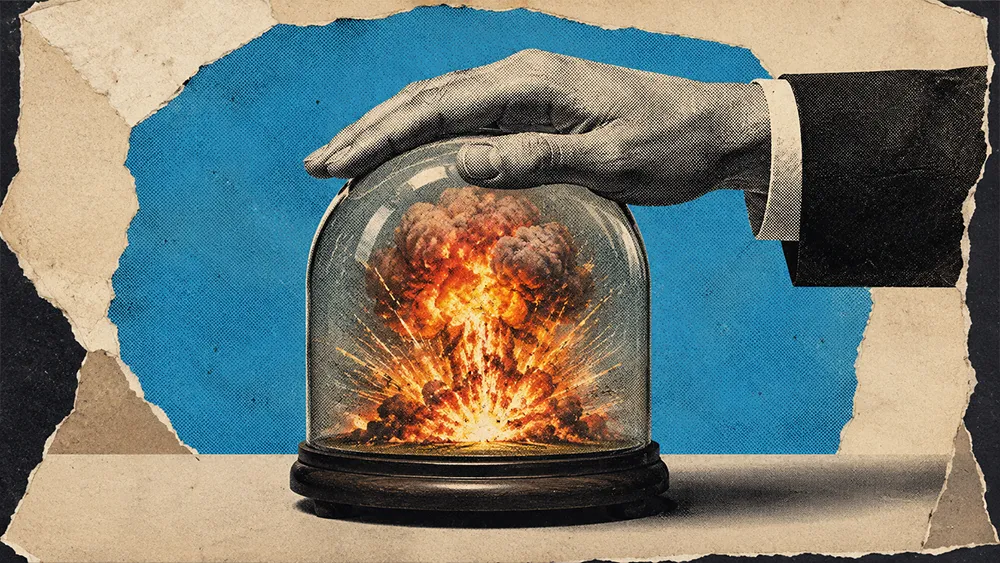

Foundations first: The deeper issue is that AI amplifies structural weaknesses that were never resolved. "AI doesn't fix weak foundations. If you don't have clear ownership, accountability, and defined processes, AI just makes the existing gaps exponentially bigger," Bastos warned. He pointed to agentic identities as a concrete example. "We've been working with identity management for maybe twenty years, and now we need to handle agentic identities, which is a completely different game. Who owns the agents? Is it the developer? Security? GRC? If you don't have the basics well defined, how do you manage this completely different reality?"
