AI agents are starting to convert customers more effectively than many traditional marketing channels, reshaping how brands are discovered and chosen. As large language models take on a frontline role in influencing purchase decisions, generative engine optimization (GEO) is moving into the C-suite, with CIOs expected to treat autonomous agents as governed infrastructure, complete with clear ownership, controls, and risk oversight.

Helping organizations manage the shift is Pranav Kumar, Sr. Director of Digital, Data, & AI at global consulting and technology services company Capgemini. With a career spanning senior roles at firms like Adobe and PwC, Kumar is skilled at leveraging generative AI to craft cutting-edge conversational experiences. In his view, enterprises benefit from reframing GEO.

"Generative engine optimization isn’t a marketing upgrade. It’s a leadership test of whether your enterprise can orchestrate AI agents safely, strategically, and at scale." He said it's no longer about mastering a single algorithm, but about enforcing a consistent narrative across a fragmented environment of interacting agents.

The new brand guardians: In this context, the CIO's role is changing to that of co-custodian of brand authority alongside the CMO. "As a CIO, you are responsible for the brand as well," Kumar said. "So how do you go beyond the standard Google to drive those zero-click searches while promoting better governance across the brand when you interact with the end customer?"

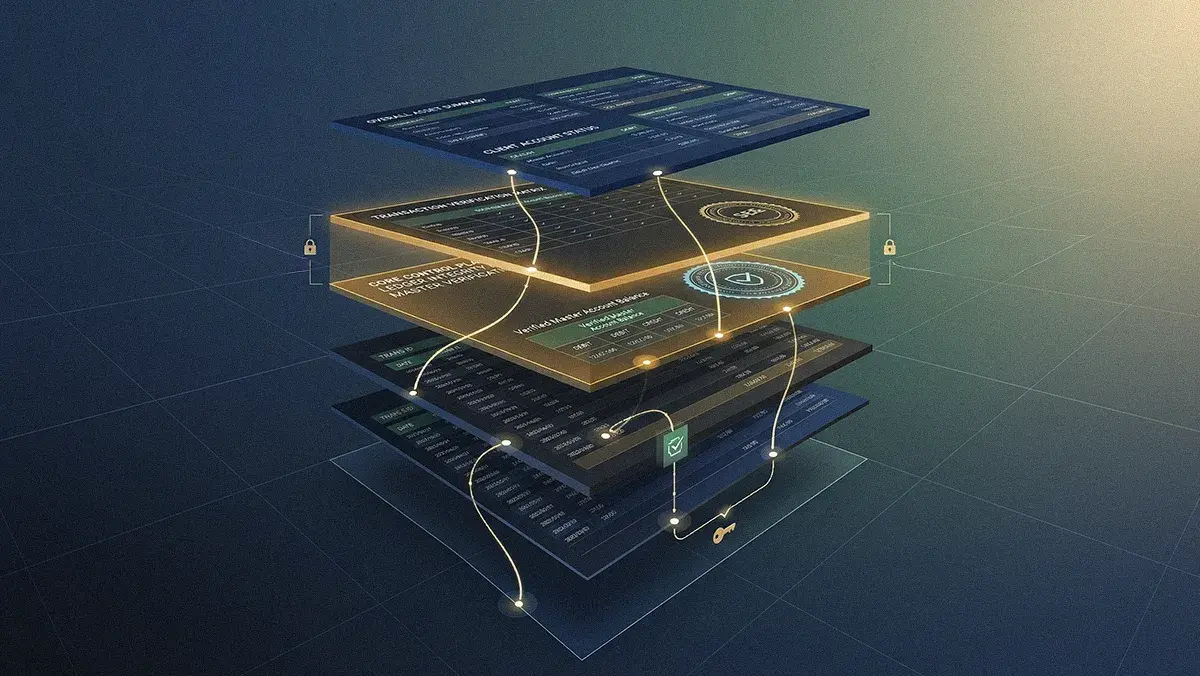

Governance built in: As agents begin communicating through emerging protocols, Kumar noted, the risk of unmonitored outputs grows. Effective oversight requires embedding brand compliance, bias filtering, and safety directly into the enterprise tech stack, from content management systems and Git integrations to the recommendation engines themselves. "It's essential to govern GEO as an integrated workflow, using centralized platforms to track metrics and connect them to enterprise-wide observability and guardrails," he said.
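
To make the idea concrete, here is a minimal Python sketch of what a guardrail embedded in an agent workflow might look like. It is an illustration only, not a description of Capgemini's or Kumar's tooling: the policy values (`BANNED_PHRASES`, `REQUIRED_BRAND_NAME`), the `check_agent_output` function, and the `GuardrailMetrics` counter are all hypothetical names. A production system would likely rely on dedicated compliance and observability platforms rather than simple string checks.

```python
from dataclasses import dataclass, field

# Hypothetical policy values, chosen purely for illustration.
BANNED_PHRASES = ("guaranteed results", "risk-free")
REQUIRED_BRAND_NAME = "ExampleCo"


@dataclass
class GuardrailResult:
    """Outcome of checking one piece of agent-generated text."""
    approved: bool
    violations: list = field(default_factory=list)


def check_agent_output(text: str) -> GuardrailResult:
    """Apply simple brand-compliance rules before text reaches a customer."""
    violations = []
    lowered = text.lower()

    # Rule 1: block phrases the brand has decided never to use.
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            violations.append(f"banned phrase: {phrase}")

    # Rule 2: require the brand to be named in the output.
    if REQUIRED_BRAND_NAME.lower() not in lowered:
        violations.append("brand name missing")

    return GuardrailResult(approved=not violations, violations=violations)


class GuardrailMetrics:
    """Running counts that a central dashboard could read (assumed design)."""

    def __init__(self) -> None:
        self.checked = 0
        self.blocked = 0

    def record(self, result: GuardrailResult) -> None:
        self.checked += 1
        if not result.approved:
            self.blocked += 1


if __name__ == "__main__":
    metrics = GuardrailMetrics()
    for draft in ("ExampleCo offers flexible plans.",
                  "Get guaranteed results today!"):
        result = check_agent_output(draft)
        metrics.record(result)
        print(draft, "->", "approved" if result.approved else result.violations)
    print(f"checked={metrics.checked} blocked={metrics.blocked}")
```

In this sketch the check and the metrics counter sit in the same code path, so every agent output is both screened and counted. That is one simple way to read the call for governing GEO as an integrated workflow tied to observability.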
