Enterprise AI is revealing a problem that has nothing to do with model quality. Across industries, the systems breaking down are not the ones running the weakest models. They are the ones where AI has outpaced the systems meant to govern it. In regulated environments like financial services, the stakes are higher still: a workflow that cannot produce an audit trail is not just inefficient, it is a liability. For organizations moving toward outcome-focused workflows, closing that gap requires the same discipline engineers apply to software delivery: a control layer built for governance, and an orchestration layer built for execution, each with a different mandate.

Bijit Ghosh is tackling this problem on the front lines. As Managing Director and Global Head of Architecture & Engineering for AI/ML at Wells Fargo, he helps shape enterprise AI governance in one of the most heavily regulated financial environments. A Global AI-100 Award recipient, he previously led legacy modernization and cloud-native payments platforms at BNY Mellon processing more than $1 trillion per day. His solution for moving from scattered pilots to reliable AI, built on his earlier work on AI context engineering at Deutsche Bank, is a strict two-layer model: a control plane as the source of truth, and orchestration as the source of action.

"AI fails because the workflows are brittle and decisions are not traceable. This is where the orchestration layer unlocks," said Ghosh. "It becomes the execution brain for the system." At enterprise scale, that failure is not hypothetical. When multi-agent coordination breaks, costs and latency spiral, and the workflow cannot self-correct. The orchestration layer holds those moving parts together, coordinating routing across models, tools, MCP connections, and memory systems in a single coherent execution path.

Before that separation can do any work, two things have to be defined: what the control plane is responsible for, and what it is not. Ghosh noted that in regulated environments, the control plane should be defined before any agents are wired into production workflows.

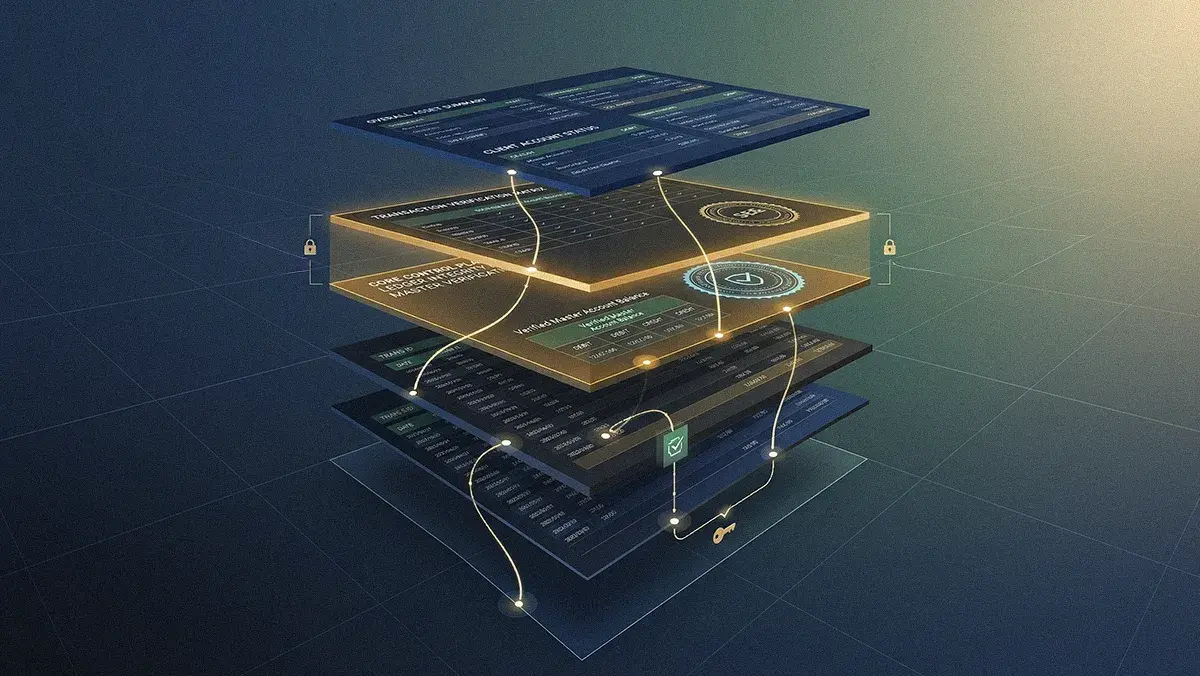

Brains and brawn: "The mental model that I see is: the control plane is acting as a source of truth, and the orchestration is a source of action," Ghosh explained. The distinction matters because each layer has a different job. The control plane holds the stable, auditable record: policies, identities, data contracts, model registry, lineage, and decision logs. Orchestration consumes that record and acts on it, routing tasks, scaling execution, and adapting as conditions change.

Rules of engagement: "Define what control really looks like before you start the orchestration: where decisions are allowed to be made, what data needs to be accessed, what must be logged, what must be auditable, and where the human-in-the-loop should be mandatory," Ghosh said. For his team, that translates into a control plane built around policy-as-code, identity and role definitions, model registry and lineage, and data contracts governing how data flows into every workflow. The decision trace is a complete, immutable log of every agent action, and it is what makes the whole system auditable when regulators come looking.
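As a rough illustration of the control-plane pieces described above, the sketch below pairs a policy-as-code check with an append-only decision trace. It is a minimal sketch under stated assumptions, not Ghosh's or Wells Fargo's implementation: the names `Policy`, `DecisionTrace`, and `authorize`, the role and action strings, and the use of a SHA-256 hash chain to make tampering detectable are all hypothetical choices made for this example.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy-as-code rule for a single agent action."""
    action: str
    allowed_roles: frozenset
    human_in_loop: bool = False  # True means a person must approve first


@dataclass
class DecisionTrace:
    """Append-only log; each entry stores the hash of the entry before it."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, record: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"prev": prev_hash, **record}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(policies: dict, trace: DecisionTrace, agent: str, role: str, action: str) -> str:
    """Evaluate the policy for an action and log the decision either way."""
    policy = policies.get(action)
    if policy is None or role not in policy.allowed_roles:
        outcome = "deny"  # unknown actions are denied by default
    elif policy.human_in_loop:
        outcome = "needs_human_approval"
    else:
        outcome = "allow"
    trace.append({"agent": agent, "role": role, "action": action, "outcome": outcome})
    return outcome


# Illustrative policies; the action and role names are made up.
policies = {
    "read_account": Policy("read_account", frozenset({"lending_agent"})),
    "release_payment": Policy("release_payment", frozenset({"payments_agent"}), human_in_loop=True),
}
trace = DecisionTrace()
authorize(policies, trace, "agent-1", "lending_agent", "read_account")
authorize(policies, trace, "agent-2", "payments_agent", "release_payment")
authorize(policies, trace, "agent-1", "lending_agent", "release_payment")
```

The point of the design is that the orchestration side only ever calls `authorize`; it never decides policy itself, and every call, including a denial, leaves a record that `trace.verify()` can later check for tampering.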

With the control plane established, orchestration takes over as the execution layer. It handles the task routing and coordination of multi-agent systems that sit on top of those rules, adapting as latency, cost, or workload change. In banking, those workflows span a surprising number of steps. The central design question is not which framework to use, but whether the truth and action layers stay distinct.

The 10-hop headache: "In a complex workflow, especially on our side in payments or lending systems, we have more than 10 different hops. Those are complex workflows, and those are deterministic and at the same time non-deterministic, from action planning to execution to validations," Ghosh said. Long workflow chains whose steps can behave differently on each run are what break naive agent frameworks at scale. That is why keeping the control and execution layers separate is not optional: it is the design principle that keeps complex, multi-hop workflows traceable at every step.

Crossing the streams: The power comes from letting those layers talk to each other without collapsing them into a single system. "This separation is very critical," said Ghosh. "If you mix them, there will be logical drift from prompt to context, there will be auditable breaks, and policy becomes too implicit for enterprise adoption."

Ghosh's answer to that architectural risk is organizational: a hub-and-spoke model where the platform team holds the center. That central team owns the control plane and orchestration primitives, builds and runs the agent framework and workflow engine, and manages the underlying GPU infrastructure. Lines of business sit at the spokes, with their own data governance and AI/ML teams building agents, models, and evaluation frameworks on top. Across both, the control plane enforces shared governance structures while each line of business owns its outcomes. The question that follows is how to know whether that model is actually delivering, and Ghosh measures that at every layer.

FinOps for bots: The measurement framework starts at the top and works down. For Ghosh, the top tier is business impact: revenue uplift, cost per process, fraud and error reduction, and customer experience tracked through NPS, resolution time, and SLAs. Beneath that sits operational efficiency: straight-through processing gains measured against two years prior, and FTE hours recovered through automation. As token-based pricing and GPU costs climb, cost measurement has to go deeper, down to the workflow and agent level. AI FinOps is simply the new bottom line. "The new element we're looking at from the AI economics side is token efficiency, tracking how many tokens are becoming useful versus the total tokens," said Ghosh. "As the GPU and compute become more expensive, we are also looking at the compute utilization side. We need to make sure that every cluster and compute is targeted enough to consume 70 to 90% of it."
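The two AI-economics measures Ghosh mentions reduce to simple ratios, sketched below. This is an illustrative calculation only: the function names are hypothetical, the article does not say how a token is classified as "useful", and the sample figures are invented for the example. The 70 to 90% band comes from the quote above.

```python
def token_efficiency(useful_tokens: int, total_tokens: int) -> float:
    """Share of tokens that were useful; how 'useful' is decided is left to the caller."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    return useful_tokens / total_tokens


def compute_utilization(used_gpu_hours: float, provisioned_gpu_hours: float) -> float:
    """Fraction of provisioned GPU capacity that was actually consumed."""
    if provisioned_gpu_hours <= 0:
        raise ValueError("provisioned_gpu_hours must be positive")
    return used_gpu_hours / provisioned_gpu_hours


def in_target_band(utilization: float, low: float = 0.70, high: float = 0.90) -> bool:
    """True when utilization falls inside the 70-90% target range."""
    return low <= utilization <= high


# Invented sample numbers for one workflow and one cluster.
efficiency = token_efficiency(useful_tokens=6_000, total_tokens=8_000)
utilization = compute_utilization(used_gpu_hours=820, provisioned_gpu_hours=1_000)
print(f"token efficiency: {efficiency:.0%}")
print(f"cluster utilization: {utilization:.0%}, in band: {in_target_band(utilization)}")
```

Computing these per workflow and per agent, rather than per platform, is what lets cost measurement go down to the level the article describes.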

The speed limit: Governance speed is itself a metric. "We are tracking the time to approve new use cases so that the LOBs have confidence in us. We want to accelerate governance so it is not slowing things down," he said. The operational layer borrows directly from SRE discipline. Ghosh tracks end-to-end workflow success rates across multi-agent runs, fallback trigger frequency, and exception recovery rates. The goal is to reduce multi-agent coordination inefficiencies in the same way SRE teams target incident rates in traditional systems. That operational discipline points to a larger strategic question, one that every enterprise building at this scale eventually faces.
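The three operational measures named above can be derived from per-run records, as in the sketch below. The `WorkflowRun` fields, the `summarize` function, and the exact definitions (for example, fallbacks counted per run, and recovery rate as recovered exceptions over raised exceptions) are assumptions made for illustration; the article does not specify how Ghosh's team defines them.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    """Hypothetical record of one end-to-end multi-agent workflow run."""
    succeeded: bool
    fallbacks_triggered: int
    exceptions_raised: int
    exceptions_recovered: int


def summarize(runs: list) -> dict:
    """Aggregate success, fallback, and recovery metrics over a batch of runs."""
    total = len(runs)
    if total == 0:
        raise ValueError("at least one run is required")
    raised = sum(r.exceptions_raised for r in runs)
    recovered = sum(r.exceptions_recovered for r in runs)
    return {
        "success_rate": sum(1 for r in runs if r.succeeded) / total,
        "fallbacks_per_run": sum(r.fallbacks_triggered for r in runs) / total,
        # None when no exceptions occurred, so the rate is not misreported as 0 or 1.
        "exception_recovery_rate": recovered / raised if raised else None,
    }


# Invented sample data for four runs.
runs = [
    WorkflowRun(True, 0, 0, 0),
    WorkflowRun(True, 1, 2, 2),
    WorkflowRun(False, 1, 1, 0),
    WorkflowRun(True, 0, 1, 1),
]
metrics = summarize(runs)
print(metrics)
```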

The architectural clarity Ghosh described forces a practical question for every enterprise: what to build and what to buy. His answer turns on the distinction between proprietary and commoditized. The control plane belongs in-house. Ghosh was direct: this is the source of truth layer, and it must be rock solid, internally built, and hardened against the institution's evolving risk appetite, regulatory obligations, and operating model. It houses policy, identity, decision traces, model lineage, and runtime guardrails. That is the proprietary layer that regulators and auditors will lean on when something goes wrong.

Orchestration is different. As agent frameworks multiply and the ecosystem matures, platform teams are better served treating workflow engines, memory systems, and API and MCP layers as interchangeable components than building them from scratch. "When we are going from 10 workflow engines to 1 million workflow engines, or from one agent framework to 10 different agent frameworks that are interacting with API layers, MCP layers, and observability tools, those are very commodity building blocks," he said.

For technology leaders navigating this transition, the evidence is consistent: governance and execution must be architected separately from day one, or the speed of agentic AI will outrun the controls meant to govern it. The teams getting this right are not the ones with the most sophisticated models. They are the ones that built the plumbing first. "From the CIO and CTO lens, build your control plane like a bank builds its ledger," Ghosh concluded. "Most importantly, it should be precise, it should be immutable, it should be trusted, and leverage your orchestration like infrastructure. The control plane is the root, and orchestration is more like the engine."
