AI has accelerated what developers can produce, but it has not accelerated how quickly organizations can ship. The gap is widening not because the tools are failing, but because the systems surrounding them were not built for this speed. Inflexible workflows, fragmented data, and business models still calibrated to hours logged rather than outcomes generated are now the primary constraint on what AI adoption roadmaps can deliver.

Sam Ferrise is the Chief Technology Officer at Trinetix, a digital transformation consultancy that builds AI-native, cloud-powered systems for Fortune 100 enterprises. Over more than two decades, he has led global engineering teams through cloud-native modernization, real-time data platform buildouts, and AI-enabled delivery across industries including logistics and waste management. He argued that for most organizations, the coding bottleneck has already moved, and that where leaders choose to look for the answer will determine whether AI investment translates into shipped product or stalls in the process layer.

"The way we traditionally measure developer productivity is velocity, but with AI, that breaks down. We should be measuring the time from business intent to working software, because that's where value is actually created," said Ferrise. The industry has not yet standardized how to track that span, and most organizations lack the tooling to do it with any precision. He described the measurement challenge as having two sides: intent-to-delivery on one end, and the token cost required to build that software on the other. His teams track both manually, and said the gaps they find almost always trace back to the same organizational blockers.

Older monolithic architectures, including AS/400 systems and decades-old codebases, have historically made rethinking the IT process feel like a complete rebuild or nothing at all. Ferrise's teams used AI tools, including Driver AI, GitNexus, and Claude Code, to build context across millions of lines of code, mapping modernization targets in a fraction of the time it would previously have taken. In recent logistics and waste management engagements, timelines that had been estimated at 12 to 24 months compressed to roughly five or six.

Piecemeal makes perfect: "We found out fairly quickly, within a couple of weeks, that we actually don't need to rebuild the whole thing. We can start piecemealing and redesigning the architecture with minimal impacts to the business," said Ferrise. The speed came from AI's ability to parse the existing codebase at scale, surfacing what could be preserved and what needed redesign before a single line was rewritten.

Even a perfectly mapped architecture stalls when the workflows around it stay broken. The pattern shows up clearly in procurement: leaders integrating AI across enterprise systems like Salesforce and ServiceNow often run into workflows that the organization has no appetite to change. In environments with highly regulated legal structures, the instinct is to preserve existing workflows and insert AI between the steps rather than eliminate them entirely.

Frankenstein's workflow: "Bolting AI onto an existing process is the wrong approach. If you don't redesign the process, you will not get the efficiency gains that you're expecting," said Ferrise. When one client pushed back, Ferrise said, his team declined the engagement entirely rather than deliver an integration that wouldn't move the needle.

C-suite or bust: Redesigning rather than augmenting requires someone with the authority to make that call. "If you don't have enough executive buy-in and you're not willing to rethink what you're doing, it's not worth the conversation. You simply won't get the ROI you expect," said Ferrise. He said the conversations that stall at the technology level are almost always missing a decision-maker willing to question the process itself.

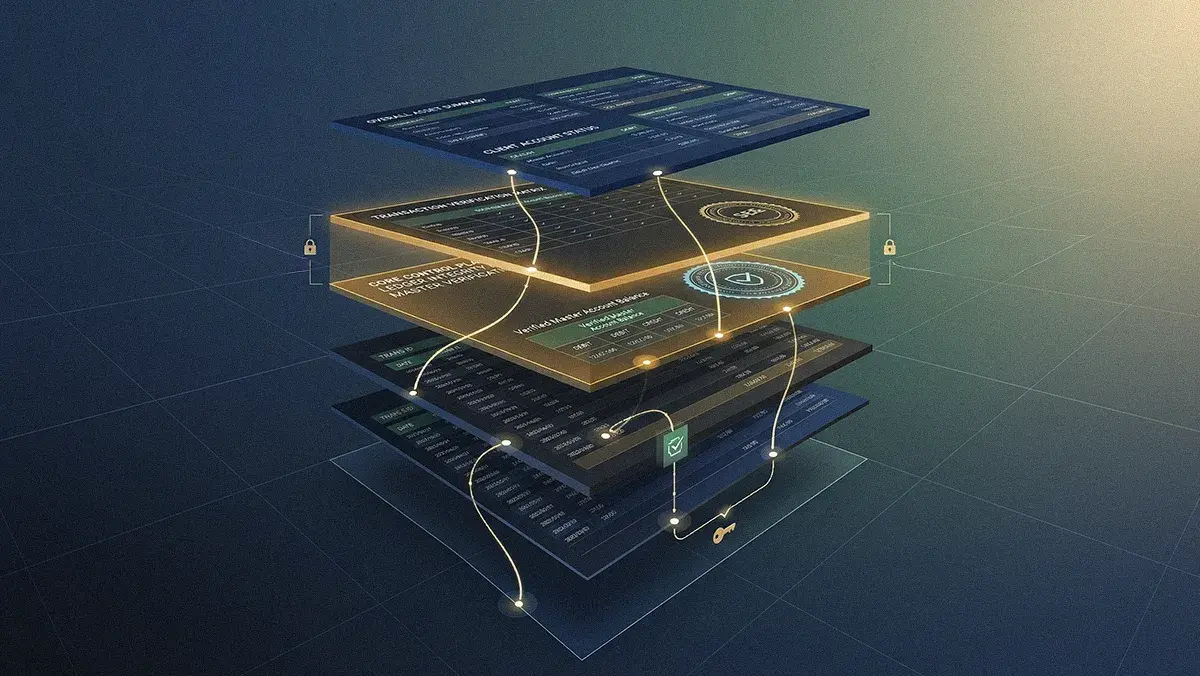

Process rigidity isn't the only place AI pilots stall. Teams that invest in small-scale pilots often find them impossible to scale because the backbone systems underneath lack clear, accessible data policies. To avoid surprises at scale, Ferrise suggested treating data hygiene as a precondition, not an afterthought, likening unmanaged data to organizational debt that compounds risk and cost the longer it sits ignored.

The debt collector: "Having policies around who can access what information is just good hygiene from a technology management perspective. The cost of going back and doing it later is exponentially higher," said Ferrise. "Bad news doesn't get better with time. Just go take care of it." He noted that the calculus holds regardless of where an organization's data stands: the cost of remediation only grows, and deferring it never makes the problem smaller.

Token cost is the other side of the measurement challenge Ferrise described, and unlike intent-to-delivery, it arrives on the P&L whether or not the software ships. The cost structure of large-scale AI is not yet well understood at the organizational level. Highly capable models like Anthropic's Opus 4 consume tokens significantly faster than more cost-effective alternatives such as Sonnet, and the difference compounds at team scale. At small pilot size, the exposure is manageable. Past a few hundred engineers, it is not.

The meter is running: "You can burn tokens very quickly without delivering any real value, and it can get very, very expensive if you're not careful," said Ferrise. His teams track token usage per developer, per feature, and per sprint, not because the methodology is mature, but because without it, budgets move faster than anyone notices.
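
One minimal way to picture that kind of tracking, assuming usage records can be exported with a developer, feature, and sprint attached, is to sum tokens along each dimension. The `UsageRecord` fields, the `total_tokens_by` helper, and the sample figures are hypothetical and do not reflect Trinetix's actual tooling.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One hypothetical usage entry exported from an AI coding tool."""
    developer: str
    feature: str
    sprint: str
    input_tokens: int
    output_tokens: int


def total_tokens_by(records, key):
    """Sum input and output tokens grouped by one UsageRecord attribute."""
    totals = defaultdict(int)
    for record in records:
        totals[getattr(record, key)] += record.input_tokens + record.output_tokens
    return dict(totals)


# Illustrative sample data only.
records = [
    UsageRecord("dev-a", "invoice-export", "sprint-1", 12_000, 3_000),
    UsageRecord("dev-b", "invoice-export", "sprint-1", 8_000, 2_000),
    UsageRecord("dev-a", "route-planner", "sprint-2", 20_000, 5_000),
]

per_developer = total_tokens_by(records, "developer")
per_feature = total_tokens_by(records, "feature")
per_sprint = total_tokens_by(records, "sprint")
```

Converting these totals to spend would require the per-token rates of whichever model and plan a team is on, which are deliberately left out here.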

The freemium hangover: "You get into teams of a couple of hundred engineers and all of a sudden the cost becomes real because you're on a different plan. You're not on what I call the subsidized plan," said Ferrise. "You're paying the real cost and actually contributing to their profit." He pointed out that LLM providers often absorb the true operational cost for smaller teams, making the economics invisible until the organization is large enough to matter to the provider's bottom line.

Those economics are already reshaping how Ferrise's own firm charges for its work. Trinetix moved away from time-and-materials billing as token costs made the old model unworkable. "AI changes business models because we used to think in terms of time and materials. Now we have to think in terms of output and value," said Ferrise. He noted that the shift is not unique to consulting: as AI embeds deeper into business products, the same pressure will reach organizations across industries.

That pressure will also reshape the engineering function itself. Contrary to predictions that AI would reduce the need for developers, Ferrise described the opposite dynamic taking hold. "You can't just let it run free. The cost is one thing, quality is another, and intent is another. For the foreseeable future, engineers are going to become more valuable than we previously thought," he concluded.
