The views and opinions expressed are those of Siva Paramasamy and do not represent the official policy or position of any organization.

Enterprise AI has left the sandbox and entered production, bringing with it a level of unpredictability that traditional compliance models were never designed to handle. As models drift, outputs vary, and agents act across real business workflows, governance is being pulled out of policy decks and into execution. What now defines AI maturity is whether controls are built directly into model lifecycles, agent development, and delivery pipelines, turning governance into a daily operational function rather than a periodic check.

Siva Paramasamy is the Global Head of Developer Experience Platform Engineering at Wells Fargo, leading enterprise GenAI and platform engineering initiatives inside one of the world’s most regulated environments. A C-level technology executive with deep engineering and enterprise architecture roots, he has built a career that runs from large-scale platforms at Sprint to patented, AI-driven systems and multi-year transformations. That end-to-end experience informs his focus on governance that works in production, not just on paper.

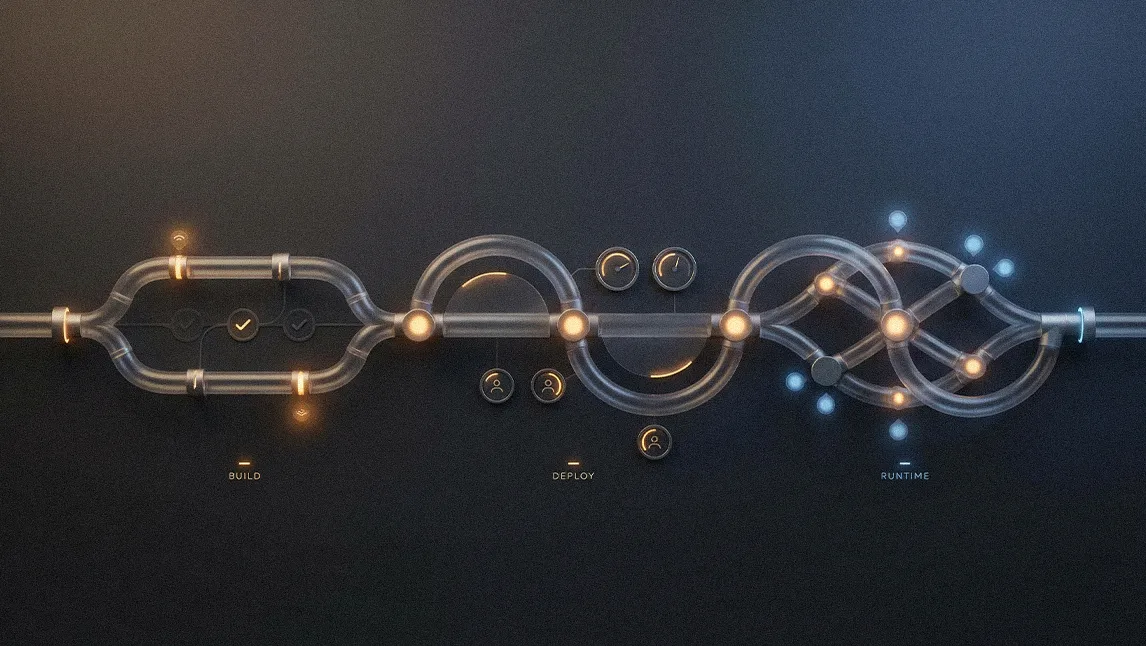

"There are two priorities. The first is the data you use to build context or tune the model, because data governance and data lineage are the foundation. The second is investing in the platform itself, your SDLC and DevOps toolchain, so you can govern agents, models, and prompts and monitor drift at build, deploy, and run time," said Paramasamy. Together, those priorities define what operational AI governance actually looks like once models and agents are embedded into everyday delivery workflows.

Low risk, high drift: Paramasamy framed the push toward hands-on governance as a response to AI’s fundamental unpredictability, comparing the moment to the mid-1990s scramble to govern the early internet. Because large language models do not behave consistently, after-the-fact compliance checks break down in practice. His answer is to extend the Risk and Control Self-Assessment (RCSA) framework into a full lifecycle discipline for models and agents, with controls embedded from build through runtime. "When a model is drifting or a prompt is not producing consistent results, that is a drift issue, and it requires controls like alerting and human-in-the-loop oversight, because even today’s low-risk use cases are already drifting in production," he explained.
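To make the drift controls Paramasamy describes concrete, here is a minimal Python sketch of a runtime check that scores how consistently a single prompt answers across repeated runs, raises an alert when consistency drops, and routes the worst cases to a human reviewer. It is an illustration under stated assumptions, not his or Wells Fargo's implementation: the names `check_prompt_drift` and `DriftDecision`, the exact-match consistency measure, and the 0.9 and 0.7 thresholds are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class DriftDecision:
    consistency: float  # share of runs that agree with the most common output
    alert: bool         # True when consistency falls below the alert threshold
    needs_human: bool   # True when the output should wait for human review


def check_prompt_drift(outputs, alert_below=0.9, review_below=0.7):
    """Score how consistently one prompt answered across repeated runs.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    if not outputs:
        raise ValueError("need at least one output to score")
    # Normalize whitespace and case so trivial differences do not count as drift.
    normalized = [o.strip().lower() for o in outputs]
    top_count = Counter(normalized).most_common(1)[0][1]
    consistency = top_count / len(normalized)
    return DriftDecision(
        consistency=consistency,
        alert=consistency < alert_below,
        needs_human=consistency < review_below,
    )


# Hypothetical outputs from re-running the same prompt.
stable = check_prompt_drift(["Approve", "approve ", "Approve"])
drifting = check_prompt_drift(["Approve", "Deny", "Escalate", "Approve"])
print(stable)
print(drifting)
```

In a real pipeline, exact-match comparison would likely give way to a semantic similarity measure, and the alert would feed whatever monitoring and review tooling the organization already runs. The point of the sketch is only that the control sits in the execution path rather than in an after-the-fact audit.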

Obstacles in execution: But having a framework is only the starting point, because execution at enterprise scale is where most AI programs stall. Integrating fast-moving GenAI workflows into legacy development lifecycles introduces operational friction, and that challenge is compounded by a widespread skills and knowledge gap. "The difficulty of adapting existing software development lifecycles for GenAI, combined with the lack of necessary skill sets and general knowledge, is what slows adoption," Paramasamy said, adding that organizations cannot rely on hiring alone because "everyone is trying to do the same thing."
