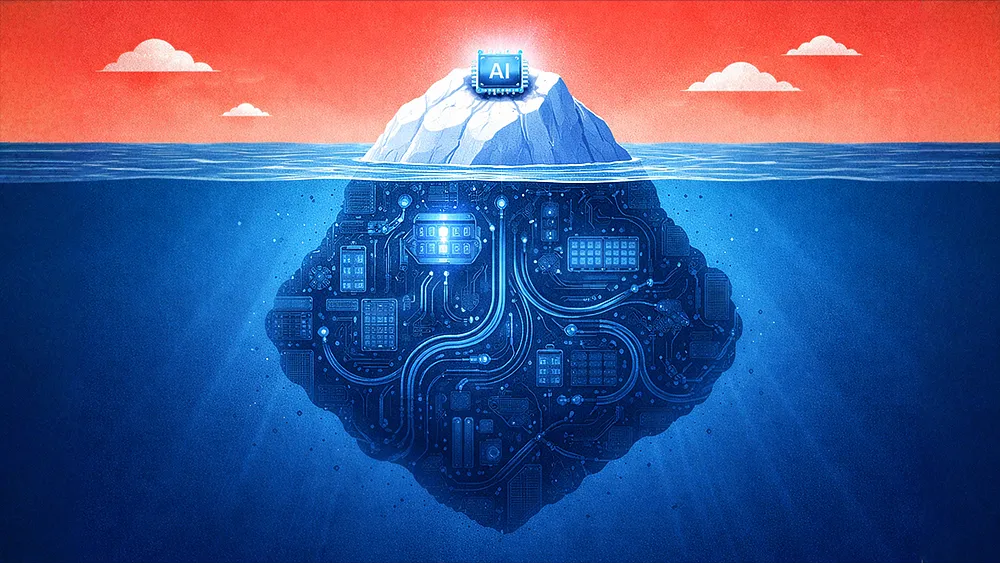

As companies adopt AI, many have taken a critical misstep: bolting AI onto surface-level workflows. The problem is that optimizing one visible process in isolation can sow chaos across the rest of the business, creating expensive messes and eroding the collective wisdom that holds an organization together. The solution lies in adopting enterprise-wide frameworks that align AI with strategy, operations, and continuous learning, ensuring innovation strengthens the system rather than destabilizing it.

Mei Lin Fung is Vice Chair of the UN AI for Good Impact Steering Committee. Her career stretches from the trenches of enterprise software to the highest echelons of global policy. Her foundational experience comes from her time at Oracle, where she pioneered one of the first customer relationship management (CRM) systems. She has also done advisory work on G7 policy briefs and contributed to the UN Commission on the Status of Women on digital innovation. She said the key to avoiding this misstep is to establish frameworks that turn AI from an agent of chaos into a disciplined tool that moves the enterprise toward its goals.

"If you bolt AI onto what you can see, you optimize a tiny piece of the iceberg and risk creating chaos everywhere else," said Fung. She suggested that organizations replace static, linear thinking with an operating philosophy built for constant change: the OODA loop, paired with an adaptation of her own How, What, Why model.

Learn or lose: Credited to Air Force pilot John Boyd, OODA (Observe, Orient, Decide, Act) offers enterprises a guiding philosophy for adapting and innovating more effectively, something Fung said is vital. "It's a dogfight with millions of dogfighters," she said. "The only way to play is to constantly learn, innovate, forecast, and test through disciplined experiments. But you must never be tied to one person's view of the world, because that's the trap." This adaptability and constant learning are what the OODA loop unlocks for enterprises.
