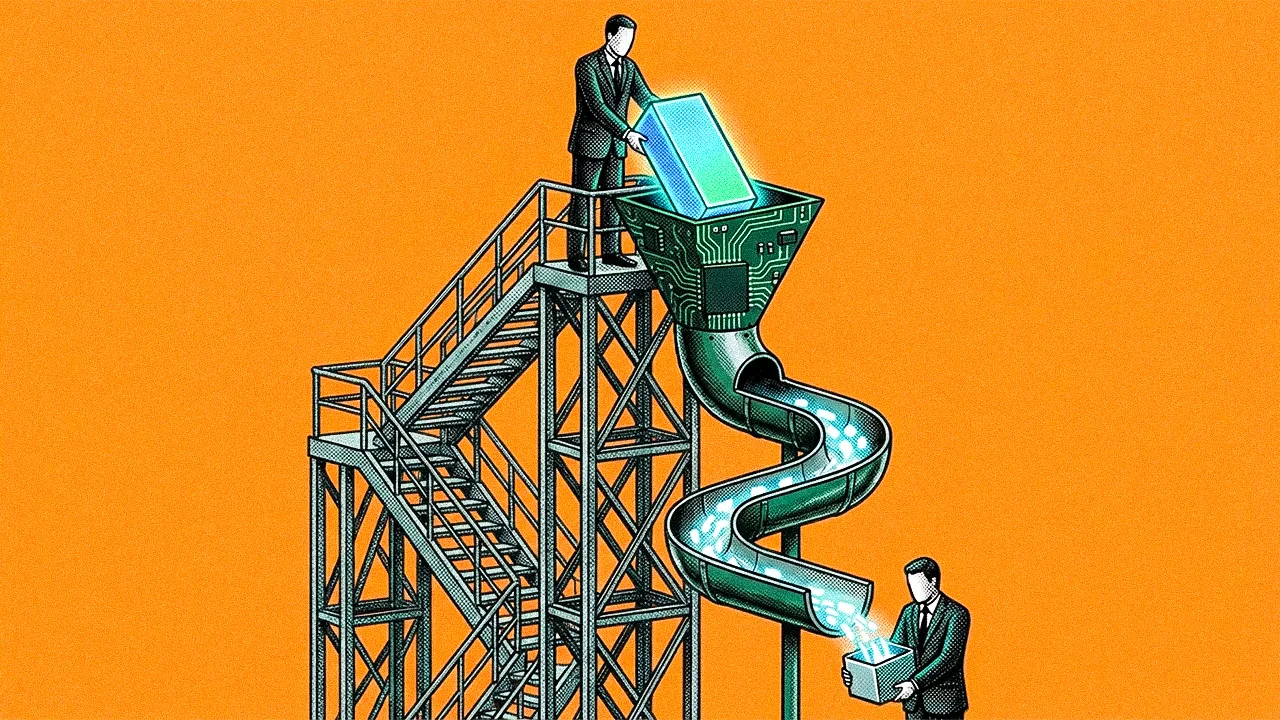

Most organizations deploying AI are asking the wrong first question. They start with which process to automate rather than whether that process should exist in its current form at all. The more consequential work is upstream: restructuring when and where decisions get made so that the complexity downstream (the rework, the exception handling, the audits after the fact) never materializes. That reframing turns AI from a productivity tool into something that changes the operational terrain itself.

Gary Meyer is a Strategic Markets Executive at TriZetto Healthcare Products, the Cognizant-owned platform that powers claims processing and payer-provider collaboration for major health plans. He has spent more than 30 years working at the intersection of healthcare technology, interoperability, and enterprise architecture, contributing to standards-based platforms that align data exchange, regulatory compliance, and decision intelligence across complex payer-provider systems.

"AI isn't just automating decisions. It's reshaping the entire decision architecture of the business," Meyer said. The shift he described is structural. When an organization introduces AI into a workflow, it changes not just that step but the logic of every step around it. And because every participant in a value chain is adopting AI simultaneously, the effects compound. A process that has made sense for twenty years may no longer be the most important thing to optimize.

Look left and right: "Before you do AI, you need to understand what others are doing with AI. Everyone upstream and downstream in your value chain is changing their workflows too," Meyer said. In healthcare, that means payers, providers, regulators, and technology partners are all reshaping their decision processes at the same time. Automating a step in isolation risks optimizing something that the rest of the chain has already moved past.

Left-shifting constraints: Much of what enterprises call a business process is actually normalized rework: governance, exception handling, and validations that exist because the right information was not present when the original decision was made. Meyer used healthcare as an example. "If we can move some of those dependencies into decisions made earlier, closer to patient care, we create better decisions up front that require less rework later," he said. In practice, that means using AI adjacent to the provider to surface context at the threshold that matters, rather than overwhelming clinicians with information or ignoring it entirely.

The organizational implications are significant. AI forces teams to confront siloed structures that were tolerable when each group optimized its own piece of the system. Now that data and decisions flow across the enterprise, those barriers have become the primary bottleneck. Meyer said the ability to break them down is becoming a competitive differentiator.

Cultural readiness: "When we're talking about AI, we're talking about decisions, thought, intelligence. That forces us to be deeply retrospective about decision rights, authority, and governance. These are hard conversations, and if an organization cannot have them with psychological safety, they're in trouble," Meyer said. He argued that subject matter experts who bring epistemic humility and patience will matter far more than speed alone.

No-regret investments: While the AI model layer moves fast enough that any specific tool might be obsolete in quarters, not years, Meyer sees the data layer as far more stable and worthy of sustained investment. "All data now needs to have its provenance. Where did it come from? Confidence in your outputs has to be based on that," he said. The same principle applies to enterprise data platforms where reliability and trust outlast any individual AI capability built on top. He also pushed back on the common advice to perfect data before applying AI, noting that dirty data carries signal about what's broken in the processes that produced it.

Surfacing data for AI: Traditional interfaces, both UIs for humans and fine-grained APIs for systems, were not designed for AI consumption. Meyer flagged this as a practical bottleneck. "You burn through a lot of tokens when APIs aren't designed for AI. How you surface data in a way that's reusable across silos and even beyond the enterprise perimeter is increasingly important," he said. Innovations like MCP point in this direction, but the underlying design challenge of making data accessible to autonomous agents without excessive cost requires deliberate architectural choices.

The pattern Meyer returned to was that AI amplifies whatever organizational maturity already exists. Companies with thick decision context, where the right data, incentives, and accountability are present when decisions get made, will simplify workflows dramatically. Companies where silos are hardened and governance is unclear will find AI accelerates confusion just as efficiently. "The financial dynamics and the data flow dynamics are much more stable than how they've been arranged into existing workflows," Meyer said. "The workflows can be simplified with AI. But the meaning of the data, the processes, the incentives, the costs, the risks, that's what's durable."
