Across industries, CIOs face a common AI dilemma: They’re under pressure to keep up with competitors, stay ahead of AI mandates, and show that early investments in the technology are paying off. But many are not yet comfortable deploying AI broadly across operations. The result? Plenty of pilots, but real-world impact that is elusive, underwhelming, or isolated.

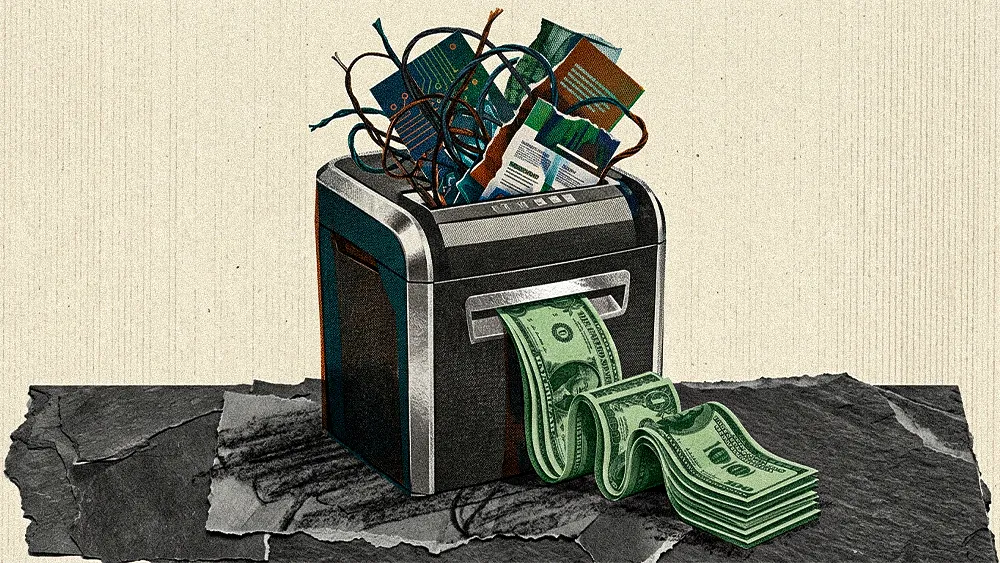

According to an industry study, 75% of CIOs regret the AI buying decisions they’ve made in the past year. And if they don’t show value soon, AI budgets are at risk. Luckily, the opportunity is waiting for CIOs willing to take on the challenge.

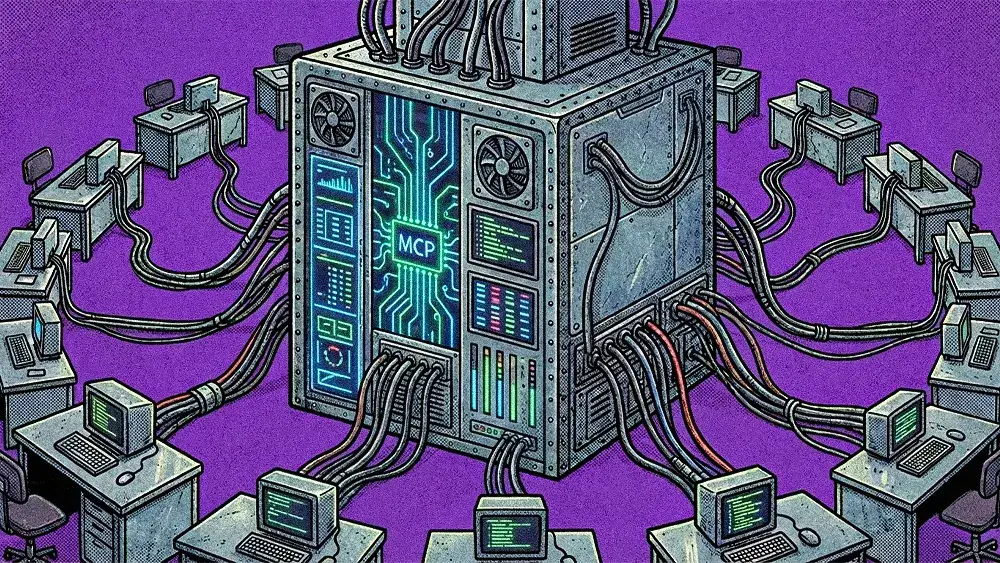

AI has advanced far beyond simple chatbots. With the Model Context Protocol (MCP), companies can connect powerful LLMs to core business systems, quickly turning them into AI agents that can execute commands autonomously. But while these agents can operate independently, the question becomes: are companies ready to let them?

Today, the main hurdle is consistency. Even when AI agents are technically accurate, they can return ten different responses to the same prompt. Some have described these basic agentic AI systems as “eager interns”: excited to help, but lacking the professional knowledge needed to do the work properly. Security is also a major concern. A recent study found that organizations lacked visibility into 95% of MCP deployments.

“Right now it's an unsolved problem because it's the wild, wild West,” Jon Aniano, SVP of product and CRM applications at Zendesk, told VentureBeat. “We don't even have a defined technical agent-to-agent protocol that all companies agree on. How do you balance user expectations versus what keeps your platform safe?”

Weak security, coupled with subpar results, is eroding confidence in AI at a time when organizations are rapidly trying to expand their use of the technology. According to Gartner, by 2027 over 40% of AI investments will fail because of governance and infrastructure problems.

Trust in AI is now an MCP problem

On its own, implemented through open-source libraries or lightweight frameworks, MCP is too shallow and fragile to support enterprise AI workloads. It operates like a simple API call rather than the fortified foundation businesses need to support their growing fleet of AI agents, and that limits what those agents can ultimately do.

“Real-world integrations have got to be secure, they've got to be scalable, they have to be production-grade, there has to be guardrails,” Karen Bolda, Chief Product & Technology Officer, B2B, previously told CIO News.

.svg)