The gap between deploying AI and capturing its value is the defining challenge of the CIO role. Technology leaders are now judged on what the business gains, not only on what IT deploys. That shift has moved the CIO from systems operator to outcome orchestrator, responsible for connecting people, process, data, and cross-functional execution to measurable business performance. It is a role that places CIOs at the center of the strategic agenda for AI transformation, and one that research increasingly confirms is expanding in scope faster than most organizations have prepared for.

Jimi Li, CTO and CIO at ALM, the legal media and data insights company that owns law.com, has led technology transformation across four industries: financial services at GE Capital, global e-commerce at L'Oréal and Coach, media at News Corp, and B2B software at ALM, tying every initiative directly to P&L impact. Most recently, he led an AI transformation that contributed to a successful private equity exit, driving roughly 40% profit growth through AI-enabled margin expansion while cutting technology spending by about 30%. He said that CIOs and CTOs are uniquely positioned to lead transformation because they sit closer to the full scope of organizational change than any other executive.

"CIOs and CTOs are in a unique position where they are familiar with technology, process, and data. They are the closest expert any CEO can find within the organization," said Li. That proximity to every layer of transformation, spanning technology, process, data, and people, is what has pushed the CIO role from back-office function to strategic center. It is also what makes the gap between deploying new tools and actually capturing their value so visible to technology leaders before anyone else in the room.

Most enterprise structures were designed for a different era and have never been updated to reflect what the business actually runs on today. Layering AI on top of them does not fix that. It just relocates the constraint. For Li, closing the gap means two things: mapping the full workflow management loop to find where value is actually being lost, and understanding what the change means for every function that has to live with it. He noted that a stakeholder-first mentality helps establish trust and cuts through the anxiety that has accompanied recent AI discussions.

Bottleneck whack-a-mole: Li has watched organizations declare productivity wins without ever asking whether the overall output actually increased. That question, he said, is the one most leaders skip. "AI coding is something everybody's talking about these days, and a lot of people are claiming that it increases developer productivity by 80%. But I'm going to ask the question: Can any organization say that they've increased their overall output by 80%? None of them can say that," Li said. "You're optimizing an individual part or individual task, but the entire workflow and decision making is not touched. You're just moving the bottleneck from one place to another. You're coding 80% faster, but the validation speed stays the same. So it's almost irrelevant if you can code faster or not."
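Li's point about moving the bottleneck can be made concrete with a little arithmetic. The sketch below is a minimal illustration, not anything Li described: it assumes a simple serial workflow whose sustained output is capped by its slowest stage, and the stage names and weekly capacities are hypothetical numbers chosen only to show the effect.

```python
# Minimal sketch: in a serial workflow, sustained output is capped by the
# slowest stage. All stage names and capacities below are hypothetical.

def pipeline_throughput(stage_rates):
    """Return the sustained output of the workflow (items per week)."""
    return min(stage_rates.values())

def bottleneck(stage_rates):
    """Return the name of the stage with the lowest capacity."""
    return min(stage_rates, key=stage_rates.get)

# Assumed weekly capacity of each stage before AI coding tools.
before = {"coding": 10, "validation": 12, "release": 15}

# Coding becomes 80% faster; the other stages are left untouched.
after = dict(before, coding=before["coding"] * 1.8)

# Overall gain is measured on the whole workflow, not the single task.
gain = pipeline_throughput(after) / pipeline_throughput(before) - 1

print(f"bottleneck before: {bottleneck(before)}")
print(f"bottleneck after:  {bottleneck(after)}")
print(f"overall output gain: {gain:.0%}")
```

Under these assumed numbers, an 80% improvement in one task yields a far smaller gain in overall output, because the constraint simply moves to validation. If validation had already been the slowest stage, the gain would be zero.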

Filtering the FOMO: Before approaching any peer, Li said his first question is always the same: what's in it for them? "If I put myself in their shoes and understand what their concern is, and combine that with the technology changes, I have to differentiate what's changing and what doesn't change," he explained. "We're dealing with a lot of noise these days. Over the past five years, there's been a lot of FOMO and anxiety, but the fundamentals really haven't changed. The way I communicate is based on those fundamental principles, not the technology."

Li translates empathy into a repeatable operating model. He said the organizing principle is simple: optimize internal loops around the paying customer. In his experience, that often means redesigning how teams work, not just the tools they use, breaking down silos, integrating functions, and building the cross-functional groundwork that makes a board conversation about AI land differently. That groundwork, Li said, is what allows CIOs to walk into the boardroom and speak the language directors actually respond to: business outcomes, not technical architectures.

Death by 260 questions: Li formalizes that groundwork into governance frameworks before any new capability is discussed: structured models that define how finance, HR, operations, sales, marketing, and IT will work together. "What boards don't appreciate is when CEOs and CIOs go to the board and pitch initiatives and investments without context. The board doesn't understand how that ties to the bottom line or how it's going to impact the business. Questions about data, guardrails, and security are not answered. It becomes very scattered," he observed. "They ask 260 different questions. It feels very random and it's hard to keep up. But once you have the framework and methodology in place, they can follow that and feel comfortable that you have a good handle on things."

EBITDA over algorithms: Li's answer to rising board expectations is a strictly financial lens. He treats technology as a business performance lever and never walks directors through technical architectures. "When I start talking about AI implementation, I tie it to EBITDA, revenue lift, and cost savings," Li said. "I don't mention those words directly, but the numbers speak for themselves. Whenever I mention those specifics, I notice that they open their eyes and start paying attention. Whenever I get derailed into more technically oriented conversations, you can tell from the room's reaction that they're not paying attention."

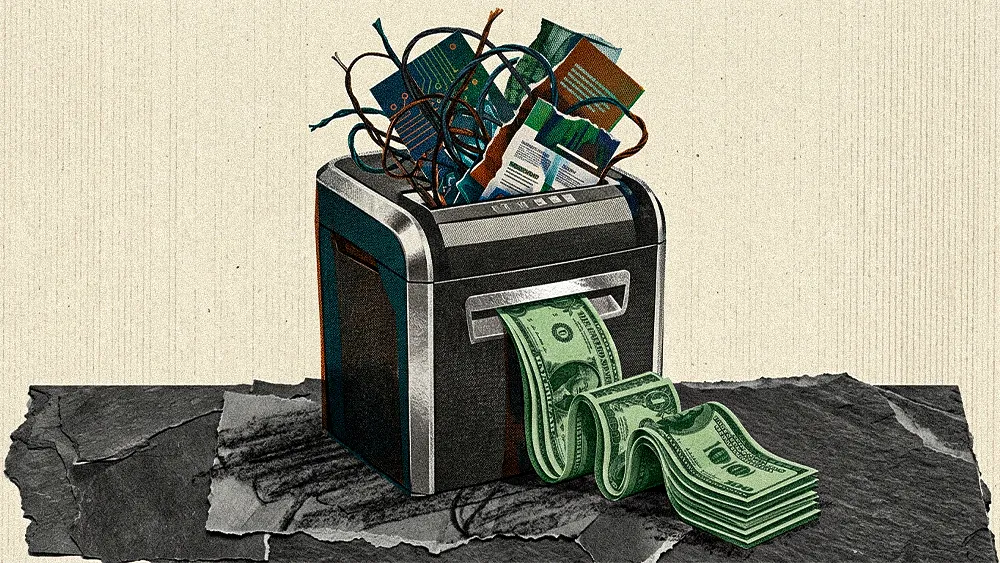

Generating new efficiencies is only half the equation. Absorbing that capacity requires rethinking how work is structured, not just how fast it moves. AI ROI does not show up in the P&L automatically. It has to be designed for, and that means confronting how roles are structured, not just how tools are deployed. It is a pressure point CIOs feel acutely, in a market where workforce constraints including skills gaps, change fatigue, and talent shortages already limit how fast AI can scale.

The capture gap: Li breaks the value capture problem into three categories. The first, and hardest, is employee value. "Number one, which is the most difficult, is employee value. If you improve the employee's productivity, it improves their satisfaction. If they used to spend five hours every day on execution work and now they spend two hours, they have three hours to spare to learn something new, to spend time with a cup of coffee or with their family. Overall they're happier," he said. "They get the same work done faster and can do something else. But the organization may not be set up to capture that value. That value stays with the employee. Whether or not the board cares about that value is a different story."

Features aren't free: The second category is more actionable but just as easy to miss. "The second category is AI adoption that improves your existing products and services. You can only realize this value when you design to capture it. If we implement AI functionality and leave it at that, you miss the value," Li said. "You have to align your sales team, sales strategy, and pricing team. Are you going to charge for it? If not, maybe you can use that as an advantage to cross-sell or upsell other services. There has to be a way to capture that value for existing products."

The third category covers new products and services with long-term strategic value, and it is where most organizations lose the thread entirely. For Li, capturing that value requires working with finance and sales to build concrete assumptions about adoption and pricing before work begins. Organizations that deploy AI without that alignment, and without preparing the workforce to absorb it, are treating transformation as an experiment. The employee capacity AI frees up does not automatically become business value. Someone has to design for it.

"A lot of people just say: 'Okay, we're trying to do this. We don't know what's going to happen. Let's hope for the best.' Those people are going to fail," Li noted. "And that's what the majority of people are talking about when they say AI doesn't deliver value. It falls into those categories because they're doing this purely as an experiment. They're not ready to capture the value."
