In many organizations, 2025 reshaped the role of the CIO.

The push to move AI from experimentation to the real world amped up the pressure on IT chiefs. Many had to navigate both technical and cultural hurdles to adoption. Failure was more common than success. And now, as companies continue to double down on AI in 2026, it is more important than ever for CIOs to be strong leaders in both business and technology.

The still-nascent AI market remains unpredictable, and the next 12 months are bound to yield new surprises, challenges, and opportunities for organizations to differentiate themselves. Amid the uncertainty, CIOs are focusing on the basics: governing data, giving it context, and delivering the information to AI systems in a fast, secure manner.

“There’s going to be more and more research and more and more optimization … in how to allow models to be able to reason and ingest much larger context,” Goldman Sachs CIO Marco Argenti told Fox Business. AI agents are “going to have more and more capabilities to really be able to give applications access to intelligence and access to tools,” he added.

This foundation is how companies expand the reach of AI agents and move beyond isolated deployments to use cases that span departments and underlying IT systems. Ultimately, it's how CIOs deliver value from AI: not abstract returns, but KPI-driven outcomes that help the business move faster, smarter, and more efficiently.

But to truly become an AI-driven organization, CIOs have to clear a significant hurdle: a lack of trust in AI. Just 6% of companies trust AI agents to work autonomously, according to research from Harvard Business Review, AWS, and Workato. Unless they close this trust gap, organizations will fail to realize the full benefits of their AI transformation.

Here are the data and AI truths guiding CIOs in 2026.

- An AI-ready data strategy is non-negotiable

Many AI investments fail because agents are forced to navigate fragmented data environments. In fact, just 26% of companies believe their data is good enough to fuel AI-powered workflows, according to IBM research.

Without unified, trusted data assets, businesses can’t confidently deploy and scale the technology in the real world. They risk running afoul of privacy regulations, introducing new security vulnerabilities, and delivering inaccurate results that can harm operations. As a result, AI projects struggle to move beyond the experimentation phase.

While some vendors tighten their grasp over customer data, companies should embrace AI agent development platforms that enable users to access their full data estate, spanning all asset types. Unfettered, secure access to proprietary data is how organizations build unique AI that gives them an edge over rivals.

“We have one of the largest and most complete data sets in the industry. We have decades of field testing data on our products, both the ones that made it to market, and the ones that were in the pipeline…and failed, as well as the genetic information about those products, so that we can start to explore and understand the relationship between what genetic combinations are most successful in what environments,” Bayer Chief Information Officer Amanda McClerren told AgFunderNews.

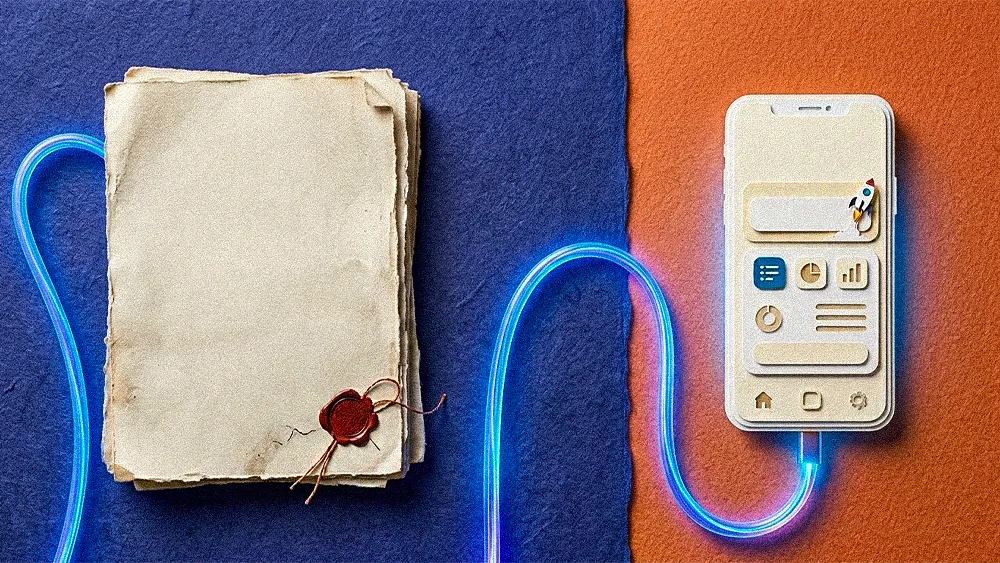

- Orchestration is essential in the move to AI-powered workflows

Today, many AI agents operate in tightly controlled environments and assist with task-level work. In 2026, companies will increasingly give these systems more autonomy and greater access to their IT environment. AI agents will begin to handle whole workflows. They’ll interact with other AI systems, creating multi-step agentic processes. And properly orchestrating these connections will become a barometer for success in AI.

“Agentic AI orchestration isn’t improving or automating technology solutions, it is offering a complete re-think on the underlying solution design from the ground up providing unprecedented dynamic enterprise outcome driven agility,” Canadian Tire Corporation Chief Information and Technology Officer Rex Lee wrote. “This enables agents to operate autonomously, learn continuously, and be swapped or upgraded modularly, while maintaining interoperability.”
