"What are you role modeling today? What is your best sales or customer success person exemplifying? If AI is watching just them, what would AI think best in class looks like?"

AI can’t be what AI can’t see. I coined that phrase thinking about ethics and values. It can sound emotionally appealing but abstract. I didn’t expect it to become the most practical advice I can give to CIOs.

What I mean is, the example you set as a human is now your most consequential AI strategy. AI is a giant mirror and we want it to amplify the best of humanity.

We’re not just designing AI for our organizations anymore. Our organizations are designing AI through every behavior, decision, and shortcut our people take. Human role models are upstream from AI in every use case and application. Role models exemplify the patterns we want baked into large language models.

Most organizations have some degree of dysfunction baked in. A 2023 Harris Poll Toxic Bosses Survey found that 41% of U.S. employees have sought therapy to cope with toxic bosses. And the picture is getting worse. Gallup’s 2025 State of the Global Workplace report shows global employee engagement has dropped to 21%, down from 23% just two years earlier. Gallup attributes the decline primarily to falling engagement among managers. In the past, the consequence of a bad boss or a disengaged employee was relatively contained. AI changes the scale at which those patterns operate. The dysfunction in your organization used to be a management problem. It is now a compounding AI problem, because your agents learn from and replicate the behavior of your people.

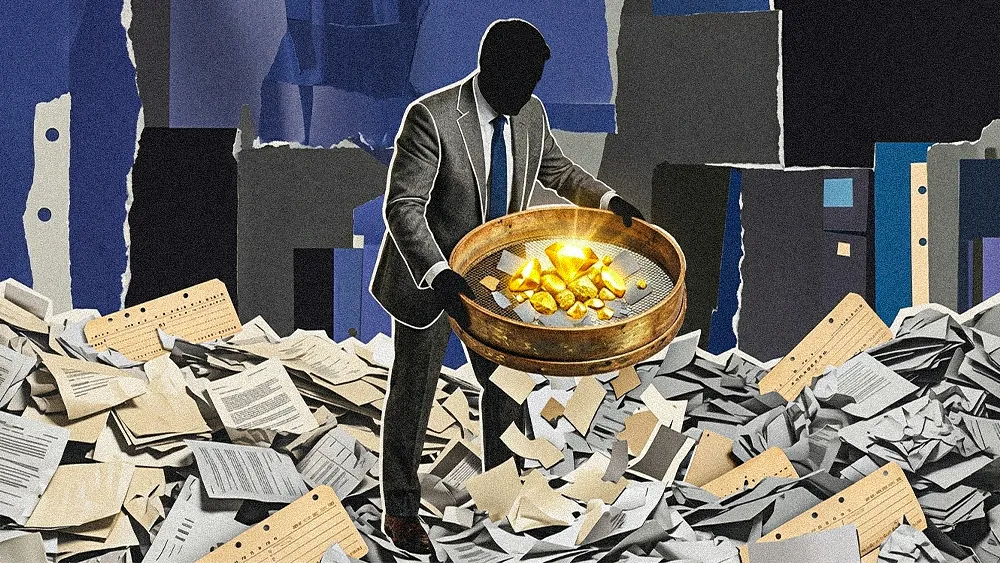

When you deploy AI agents across your internal operations, those agents learn from and duplicate what they see. They learn from the training artifacts your people produce every day (call transcripts, CRM notes, ticket histories, email threads), and they also learn from the patterns and behaviors behind those artifacts. These agents will pick up on how your people handle ambiguity, whether they take ownership or deflect when things go sideways, and how they communicate under pressure. And as these agents move from assisting your people to handling tasks autonomously, the patterns they absorb become the default. As autonomy increases, fewer routine interactions will receive direct human review.

This becomes even more important as organizations adopt protocols like the Model Context Protocol (MCP) that give AI models direct, standardized access to enterprise systems like your CRM, ticketing platform, and knowledge base. The best implementations build governance and guardrails into those connections, ensuring agents only operate within defined workflows. But even with strong governance, the quality of what agents produce still depends on the human inputs they’re drawing from. Human role models provide the guardrails and controls AI agents need. When you build AI quality assurance agents, they need role modelship practices as their foundational pattern.
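To make "agents only operate within defined workflows" concrete, here is a minimal sketch of one way such a guardrail could look. It is an illustration under stated assumptions, not how MCP or any specific product implements governance: the workflow names, system names, and the `is_permitted` helper are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical workflow definitions. Each workflow lists the
# (system, action) pairs an agent may use while running it.
# None of these names come from MCP or any real product.
ALLOWED_ACTIONS = {
    "ticket_triage": {
        ("ticketing", "read"),
        ("ticketing", "update_status"),
        ("knowledge_base", "read"),
    },
    "account_review": {
        ("crm", "read"),
        ("knowledge_base", "read"),
    },
}


@dataclass(frozen=True)
class ToolRequest:
    """A request from an agent to act on an enterprise system."""
    workflow: str
    system: str
    action: str


def is_permitted(request: ToolRequest) -> bool:
    """Return True only if the workflow explicitly lists this action."""
    # Unknown workflows get an empty allowlist, so they are denied.
    allowed = ALLOWED_ACTIONS.get(request.workflow, set())
    return (request.system, request.action) in allowed


if __name__ == "__main__":
    inside = ToolRequest("ticket_triage", "ticketing", "read")
    outside = ToolRequest("account_review", "crm", "update")
    print(is_permitted(inside))
    print(is_permitted(outside))
```

The sketch denies by default: anything a workflow does not explicitly list is refused. A gate like this limits where an agent can act. It does nothing for the quality of the notes, tickets, and transcripts the agent reads once it is inside the workflow, which is where human role models come in.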

The flip side is just as true. When your people operate with clarity, take ownership, and communicate well, AI scales those patterns just as fast. An organization with strong human foundations will see AI compound its strengths across every connected system. That’s the opportunity most CIOs are underestimating.

Wire 2 Model is how I describe what needs to happen. It means wiring humans and AI to be role models. When you make principled, unbiased, transparent decisions, you influence the kind of behavior AI systems are trained to recognize, replicate, and reward. We need to wire humans, organizations, and AI products to model behaviors worth replicating and deliver economic value in the process. When I say “model” here, I’m talking about the human kind.

Managers direct reports. Leaders inspire followers. Role models engineer identity and magnify the future.

CIOs have historically kept culture and AI in separate mental buckets. One belongs to HR and the other belongs to engineering. But the culture your leadership team builds now shows up directly in the data your agents learn from.

Culture = Role models * Habits

AI Transformation = Culture * Technology

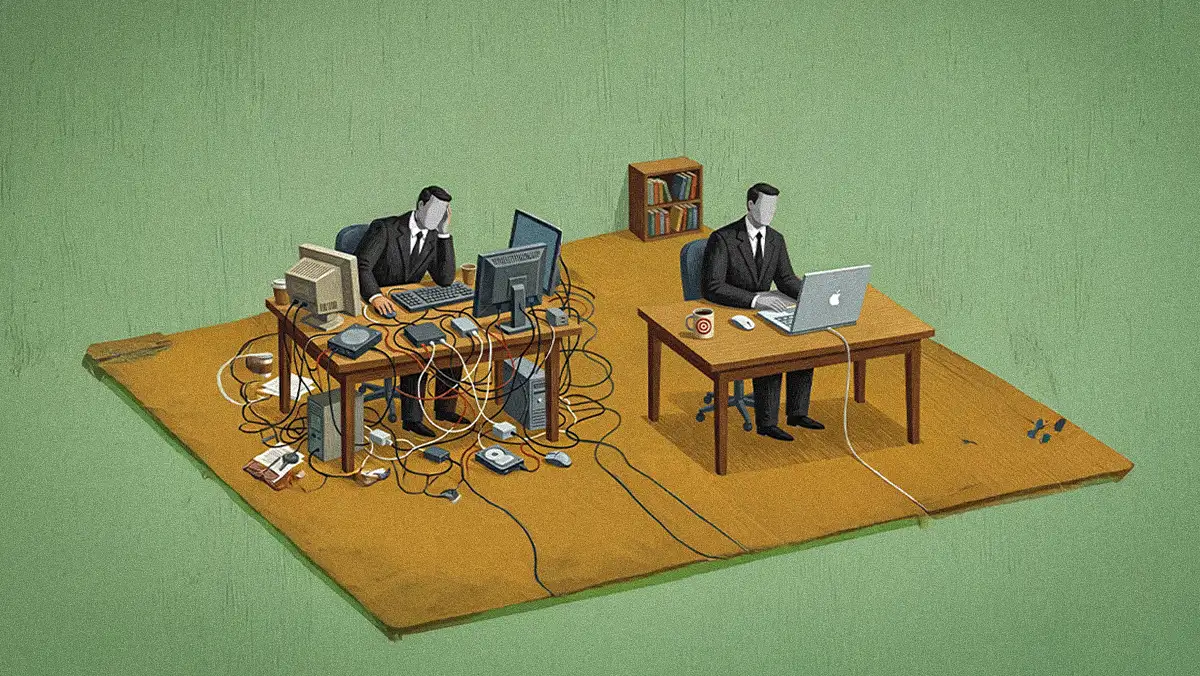

CIOs have always talked about people, process, and technology. The difference now is that AI collapses the distance between them. Your people’s behavior becomes your process, which in turn becomes your technology’s training data. They’re no longer three separate investments.

That changes what talent development means for a CIO. Growing your people directly shapes the inputs your AI systems draw from. Investing in your people with the same rigor you invest in your stack produces better AI outcomes because the raw material gets better. We must be even more intentional about how our people document, communicate, make decisions, and handle ambiguity. This is part of our AI strategy. In practice, that means stewardship, where people own their decisions and results before directing others; mentorship that builds genuine curiosity; and leadership that models transparency under pressure. These used to be considered soft skills, but now they are behaviors your AI systems learn from every day.

Role Modelship™ is no longer a soft skill. It is a hard input to AI data and algorithms.

Role Modelship is going to separate CIOs over the next few years in ways that aren’t obvious yet. You will see more focus on how to show up as the best practice example, not just how to lead. You will see more role modelship demo days encoded in AI. The technology layer is converging. Most organizations will end up with similar tools as AI continues to spread and become mainstream, but your agents will only be as good as the human role models they learn and unlearn from.
