While most enterprises use AI for simple tasks, the real operational question arises when they apply the technology to systems that involve setting goals, weighing multiple paths, and choosing courses of action. As these tools begin to act autonomously, executing workflows, making decisions, and touching cross-functional systems, leaders are asking where to draw the line on autonomy and where humans need to retain control.

Vijay Morampudi, SVP and AI CoE Leader at Marsh, has spent the last year focused on building and scaling agentic workflows in regulated environments. With more than two decades of experience in business and technology transformation, he has led teams that have increased enterprise agent success rates from an initial 30 to 40 percent to about 70 percent, relying heavily on governance and architecture to keep AI initiatives aligned with business goals and risk thresholds. He is also clear that translating human knowledge into those agents plays a central role.

"The biggest gap isn't the model. It's the 'how work gets done' knowledge that lives in people's heads. Until you codify that, your agents will fail critical edge cases," he said. That knowledge gap, encompassing the judgment calls, trade-offs, and contextual decisions that experienced operators apply instinctively but rarely document, is what separates enterprises automating simple predetermined steps from those building agents that can navigate complex, goal-oriented workflows, according to Morampudi. In his experience, the organizations seeing the strongest results are the ones that invested in codifying how decisions actually get made before giving agents the autonomy to act on them.

Before deploying agents, Morampudi often pointed teams back to a more fundamental question: where do agents actually belong? He mapped agentic workflows to organizational goals that involve competing constraints, pointing to inventory management as a useful example. In that scenario, a sales forecasting agent can collaborate with a sourcing agent to constantly balance the risk of out-of-stock events against the cost of holding excess inventory. Because these agents act as digital colleagues, teams can treat them that way, creating an onboarding plan that includes a validation layer to ensure the workflow aligns with top-line goals.

The deterministic trap: For Morampudi, an agentic approach reserves agents primarily for multi-step, goal-oriented problems and leaves deterministic tasks to traditional rule-based systems. In his view, agentic AI does not replace deterministic systems, but sits alongside them, augmenting areas where rules break down while workflows and guardrails constrain behavior. "If your tasks are deterministic and predetermined, don't bother with agents," said Morampudi. "You are unnecessarily complicating the process. A simple rule-based system will work better. Agents work best for a scenario where you need to achieve a goal."

Once agents are deployed against the right kinds of goals, they still need deep context to handle edge cases. Agents do not fail because of poor logic alone. They fail when context is incomplete, fragmented, or forgotten. Enterprise knowledge is scattered across systems, documents, and people, and much of it is inconsistent, incomplete, or not structured for machine use. The real insights live in the tacit knowledge of experienced staff. To address the gap, Morampudi's team actively deconstructs human decision-making in areas such as risk consulting. They use voice capture to interview experts, analyze past reports, and identify which variables, such as regulatory requirements or industry best practices, actually drove a recommendation.

Picking their brains: A human-centric workforce literacy approach turns unwritten know-how into structured inputs for agents. "We try to reverse engineer how a specific recommendation was provided. We build agents that go back and ask the experts how a decision was made, and we capture that information through voice," Morampudi said.

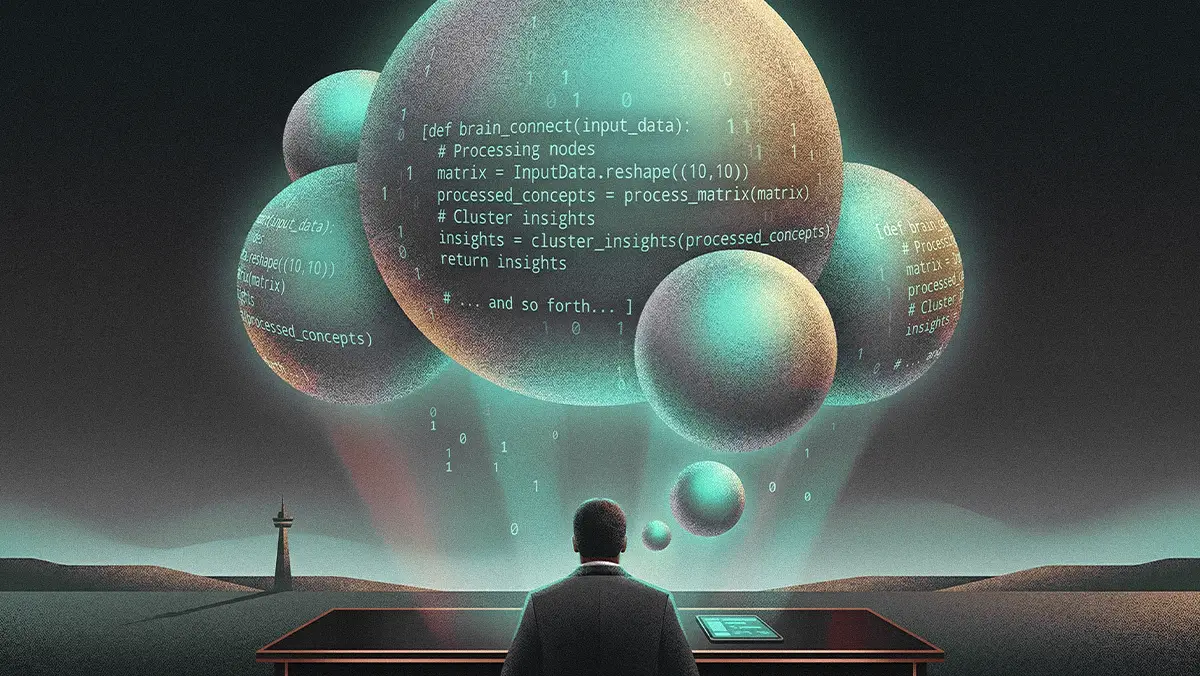

You can't just feed that extracted knowledge into an agent live in production, however. That kind of teaching requires a deliberate, offline approach and an architecture built to store and retrieve information properly. Morampudi described this in terms of a layered decision architecture: a goal layer defining what the system optimizes for, a decision layer where codified human judgment is structured and refined, and an execution layer where actions are taken and autonomy is constrained. Within that execution layer, he breaks storage down into a memory base with three distinct layers: semantic memory (facts such as regulations, standards, and best practices), historical memory (past interactions for a given scenario), and experiential memory (what worked and what did not in similar situations). In his architecture, when human experts review an agent's output, their feedback is evaluated and routed into the offline memory base. Equally important, outdated patterns must be discarded as environments shift, so that stale decision logic does not accumulate and introduce new risk. Through these continuous improvement loops, the agent can draw on updated experiential memory the next time it encounters a comparable edge case.
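The three-part memory base described above can be sketched in a few lines of Python. This is a minimal illustration, not Morampudi's implementation: the class and method names (`MemoryBase`, `record_feedback`, `prune`, `recall`), the exact-match scenario keys, and the age-based rule for discarding outdated lessons are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Lesson:
    """One piece of reviewed expert feedback (experiential memory)."""
    scenario: str        # hypothetical scenario key, e.g. "flood-risk-report"
    feedback: str        # what the expert said worked or did not work
    recorded_on: date


@dataclass
class MemoryBase:
    # Semantic memory: facts such as regulations, standards, best practices.
    semantic: dict[str, list[str]] = field(default_factory=dict)
    # Historical memory: past interactions, grouped by scenario.
    historical: dict[str, list[str]] = field(default_factory=dict)
    # Experiential memory: lessons learned from expert review.
    experiential: list[Lesson] = field(default_factory=list)

    def record_feedback(self, scenario: str, feedback: str, today: date) -> None:
        """Offline step: store expert feedback once it has been evaluated."""
        self.experiential.append(Lesson(scenario, feedback, today))

    def prune(self, today: date, max_age_days: int = 365) -> int:
        """Drop lessons older than max_age_days (an assumed staleness rule).

        Returns the number of lessons removed.
        """
        cutoff = today - timedelta(days=max_age_days)
        kept = [l for l in self.experiential if l.recorded_on >= cutoff]
        removed = len(self.experiential) - len(kept)
        self.experiential = kept
        return removed

    def recall(self, scenario: str) -> dict[str, list[str]]:
        """Collect context from all three layers for one scenario."""
        return {
            "facts": self.semantic.get(scenario, []),
            "history": self.historical.get(scenario, []),
            "lessons": [l.feedback for l in self.experiential
                        if l.scenario == scenario],
        }


# Example usage with made-up data.
memory = MemoryBase()
memory.semantic["flood-risk-report"] = ["Cite the applicable local building code."]
memory.record_feedback("flood-risk-report",
                       "Expert asked for drainage history up front.",
                       date(2024, 6, 1))
context = memory.recall("flood-risk-report")
```

A production system would likely replace the exact-match lookup with some form of similarity search and use a richer staleness test than age alone; the point here is only that feedback is written offline, pruned deliberately, and read back when a comparable scenario comes up.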

Looping humans in: Using a mix of architecture and human feedback has led Morampudi's team to increase their enterprise agent success rates from an initial 30–40 percent up to about 70 percent. "The feedback provided by the human expert is captured, so that it goes directly into memory. When the agent performs a task, it understands that it encountered a similar scenario in the past, and recalls the feedback it was given," explained Morampudi. For him, human involvement is not a binary checkpoint but a spectrum, ranging from approval gates on irreversible actions to continuous collaboration that refines the system's decision logic over time.

As agents become more capable of handling edge cases, leaders have to decide where to draw the line on autonomy. For Morampudi, a useful rule of thumb is "irreversibility." He advocated rule-based validation in high-stakes scenarios, noting that actions that are irreversible, high-impact, unauditable, or lacking clear accountability should trigger human review before execution. A large-scale vendor payment, for example, usually warrants explicit human approval, both to prevent an irreversible mistake and to verify that appropriate security controls and clear accountability for agent risk are in place. The same logic applies when agents use sensitive data in communications with the outside world.

No take-backs: Morampudi framed the autonomy question not in terms of what the system is capable of, but whether the decision itself warrants autonomy. In his view, autonomy is not a system-wide setting but a decision applied at the level of each individual action. "For tasks that we cannot reverse, we want a human involved. If an agent is communicating with the external world using internal sensitive data, we ideally would not allow it to perform autonomously," he noted.
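Because the rule is applied per action rather than per system, it lends itself to a simple rule-based check. The sketch below is one hedged way to express it in Python; the `ProposedAction` fields and the function names are hypothetical, chosen to mirror the criteria named above (irreversible, high-impact, unauditable, no clear accountability, sensitive data leaving the organization), and are not taken from Morampudi's systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An action an agent wants to take (fields are illustrative)."""
    name: str
    reversible: bool
    high_impact: bool
    auditable: bool
    has_accountable_owner: bool
    sends_sensitive_data_externally: bool = False


def review_reasons(action: ProposedAction) -> list[str]:
    """Return every reason this action should go to a human first."""
    reasons = []
    if not action.reversible:
        reasons.append("irreversible")
    if action.high_impact:
        reasons.append("high impact")
    if not action.auditable:
        reasons.append("unauditable")
    if not action.has_accountable_owner:
        reasons.append("no clear accountability")
    if action.sends_sensitive_data_externally:
        reasons.append("sends sensitive data externally")
    return reasons


def requires_human_review(action: ProposedAction) -> bool:
    """Autonomy is decided per action: any single reason blocks it."""
    return bool(review_reasons(action))


# Example: a large vendor payment cannot be undone and is high impact.
payment = ProposedAction(
    name="pay_vendor_invoice",
    reversible=False,
    high_impact=True,
    auditable=True,
    has_accountable_owner=True,
)
needs_approval = requires_human_review(payment)
```

Returning the list of reasons, rather than a bare yes or no, gives the human reviewer the context for why the action was held.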

But even with a perfect memory architecture, there is a catch. The financial impact of these systems depends less on the models and more on the humans sitting next to them. In this model, many employees find their roles moving from executing tasks to reviewing agent output, effectively becoming the trainers who power the offline feedback loops. Morampudi noted that productivity gains don't automatically translate into higher profit margins; to see a financial return, companies usually have to reconfigure work and redesign human roles. Saving an employee four hours a week doesn't actually help your bottom line unless you redesign their role to fill that time with higher-value work. Without that redesign, efficiency gains become latent capacity rather than economic impact.

The real heavy lifting: Morampudi put a number on what most organizations underestimate. "Change management is 50 to 70 percent of your whole effort. Or, put another way, change management requires three times as much effort as the technology effort. So don't underestimate it," he said.

Without visibility into how agent decisions are made, why they are made, and how they are executed, enterprise trust breaks down. The governance and evaluation frameworks emerging around agentic AI reflect the same underlying principle: that the hard work is organizational, not algorithmic. Implementing robust agent control planes and layered governance blueprints is only as effective as the institutional knowledge and change discipline behind them. Morampudi's broader point is that the enterprises seeing real returns from agents are the ones that started with that discipline. Amplifying human expertise through agents, rather than simply replacing human steps, ultimately distinguishes deployments that improve margins from those that only improve slide decks. "Start from the business," Morampudi said. "See what things you can do differently using agents that will generate value for your organization."
