"The context layer is how human stewardship scales."

In my last column, I argued that your humans are your AI strategy. This one is about stewardship at the context layer.

As an advisor, I speak with CIOs every day who are deploying AI agents into what amounts to decision factories, making thousands of choices per minute on data that they don’t fully govern. Trust is already low (Gartner reports only 41% of employees fully trust senior leaders, and Edelman reports 68% think leaders mislead). Now we’re asking AI to inherit that deficit and operate at machine speed?

The instinct is to fix this with better models. But AI can’t be what it can’t see. And what it sees right now, in most organizations, is compounded dysfunction.

In modern AI systems, what the model sees is not fixed. It’s determined at runtime by the context it’s given: the data it can retrieve, the tools it can call, the history it carries forward, and what it’s allowed to access. As agent-based systems mature, this is becoming a real layer: not just prompt construction, but a more deliberate way of assembling context.

Here’s what that means practically. Every workaround your team uses, every time someone routes around a broken process, every meeting where the real decision happens in the hallway and the AI summary captures the sanitized version: that’s the data. A 2024 UCL study found AI doesn’t just learn human biases. It learns from patterns, and context determines which ones it sees, trusts, and repeats.

I’ve started to view this issue as a role-modeling problem at scale.

You must embed Role Modelship across six layers of your AI architecture:

- the context layer that stewards what AI sees;

- the training data and labeling decisions that shape what it learns;

- the system prompts that encode your values at inference time;

- the human feedback loops that reinforce what it rewards;

- the governance boards that determine what it permits;

- and the daily human-AI interactions that set the habits it inherits.

Stewardship is a foundational part of Role Modelship. Every other discipline builds on it, and most organizations tell new leaders to start here. It means owning your character and results, giving back, and always paying it forward. I’d venture that stewardship scales rather naturally in teams of, say, ten. It does not scale to a fleet of agents unless you deliberately construct it.

The context layer is how human stewardship scales.

The context layer determines what the system sees, what it trusts, and what it carries forward. At its best, it encodes judgment about what to trust, what to include, and how to interpret it. Stewardship is about governing what someone entrusts to you for the long term. A context layer is data stewardship made operational through how data gets selected, ranked, filtered, and made available at runtime.

Stewardship needs clear owners, accountability, and ongoing care. It needs metadata lineage and enrichment, along with rules for access, ranking, and inclusion. A context layer keeps those acts of stewardship from being one-off heroics. It codifies the steward’s judgment so that others can apply it consistently each time they use AI.
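For readers who want to see what "codified judgment" could look like, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference design: the `Record` fields (`owner`, `certified`, `allowed_roles`, `trust`) and the `assemble_context` function are hypothetical names invented for this example, and a real context layer would sit on top of a catalog, a retrieval system, and an access-control service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """A candidate piece of context. All fields are hypothetical."""
    text: str
    owner: str                # the accountable steward
    certified: bool           # whether the steward has signed off
    allowed_roles: frozenset  # roles permitted to see this record
    trust: float              # steward-assigned trust score, 0.0 to 1.0


def assemble_context(records, role, limit=3):
    """Filter by certification and access, rank by trust, keep the top few."""
    visible = [r for r in records if r.certified and role in r.allowed_roles]
    ranked = sorted(visible, key=lambda r: r.trust, reverse=True)
    return ranked[:limit]


# Illustrative data only.
records = [
    Record("Q3 revenue, finance-certified", "finance", True,
           frozenset({"analyst", "cio"}), 0.9),
    Record("Q3 revenue, sales spreadsheet", "sales", False,
           frozenset({"analyst"}), 0.4),
    Record("Headcount plan", "hr", True, frozenset({"cio"}), 0.7),
]

for r in assemble_context(records, role="analyst"):
    print(r.owner, "-", r.text)
```

In this sketch, an agent acting for an analyst never sees the uncertified spreadsheet or the restricted headcount plan. The steward's decisions about certification, access, and trust are applied the same way on every request rather than renegotiated each time.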

This is where the context layer turns stewardship into guardrails.

Governed context lets a CIO rely on data rather than debate it. Without it, every AI conversation becomes a debate about whose numbers are right. But with it, the answer is already certified, and the conversation can move on to what to do about it.

But context governance alone is not enough. CIOs need to add culture readiness alongside data readiness. Three questions belong in every AI readiness assessment.

- What behaviors does your performance management system reward?

- How do your leaders respond publicly when AI surfaces an uncomfortable truth?

- Who has the psychological safety to flag a problem before it becomes a model problem?

Organizations with leadership-driven AI adoption are 1.4x more likely to have employees who believe in senior management’s vision. That clarity is a system input.

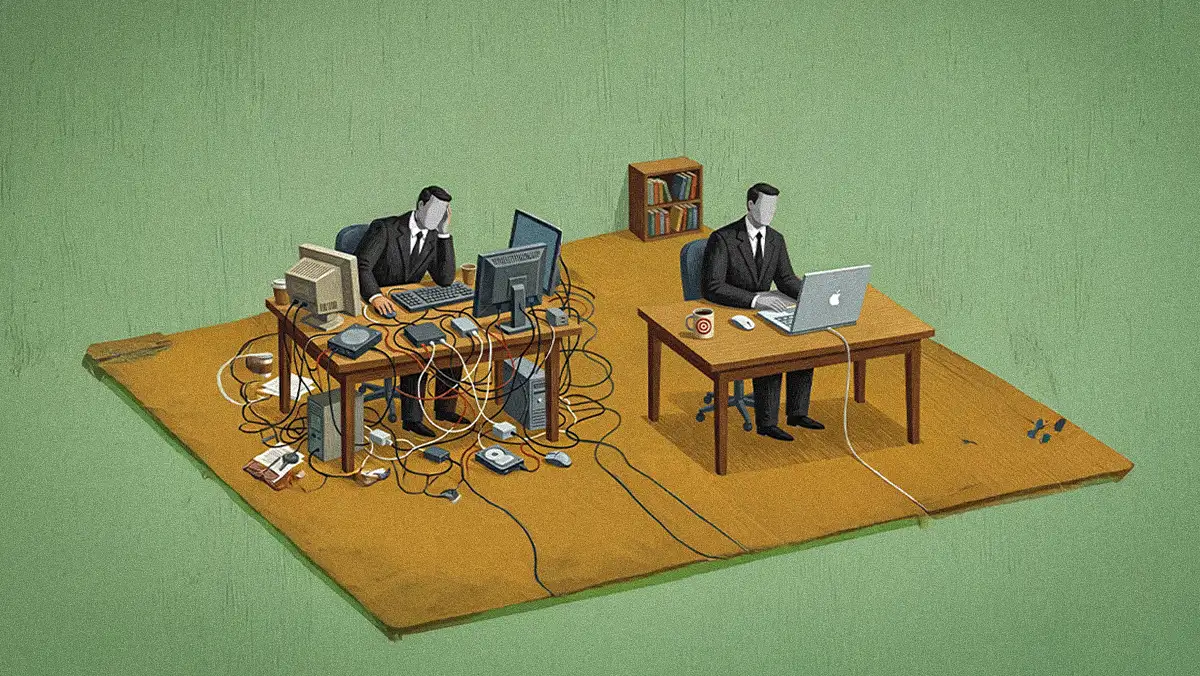

What I see across companies is this: CIOs fix the data pipeline and assume the values problem is solved. It isn't. When 31% of workers admit to actively undermining AI efforts by inputting poor data or slow-rolling adoption, that resistance becomes training data too. The dysfunction gets baked in.

Where CIOs underestimate it most is in middle management. C-suite behavior gets scrutinized. But what the middle layer does daily (how they assign work, who they credit, how they respond to failure) is the signal AI learns from. It’s invisible to most governance frameworks.

This is why Role Modelship belongs in the context layer of the AI stack as a systems input, not just a soft skill. Role Modelship is the integration of stewardship, fellowship, mentorship, leadership, and sponsorship into a single practice. Most AI roadmaps include one of these at best, usually leadership, and call it governance. That is not enough. Every decision, every story told, every person credited or not credited, that behavior is now training data. Human Role Modelship is a strategic advantage.

As we set strategic goals to embed AI into products and use it to increase human productivity, we also need to set strategic objectives for Role Modelship and the development of model humans. Stewardship has to precede automation. Our human lives are now training data for AI.

AI can’t be what AI can’t see. Let’s steward the patterns it sees.

Eli Potter is a Silicon Valley technology executive and advisor to more than 150 companies on human values and converting technology into economic value. She is the author of Role Modelship: Multiply Your Impact to Influence AI.
