Software release governance is changing from a reactive operational checkpoint into a predictive layer of financial risk intelligence. AI is putting that shift into practice, redefining how companies handle compliance and risk. The stakes vary sharply across networking environments. A hospital demands five-nines (99.999%) stability, while an industrial sensor network on a factory floor runs on different tolerances entirely. Both are forcing organizations to rethink how they build and maintain trust.

Paddu Melanahalli is Senior Director of Engineering at Cisco. With over three decades of software engineering experience, Melanahalli has built a career defined by scale and impact. He has driven releases generating $4B in revenue for millions of network devices, secured a US patent, and is a three-time recipient of the prestigious Cisco Pioneer and Cisco Pinnacle awards. He argued that old governance models are no longer equipped for the scale and financial risk of modern enterprise software.

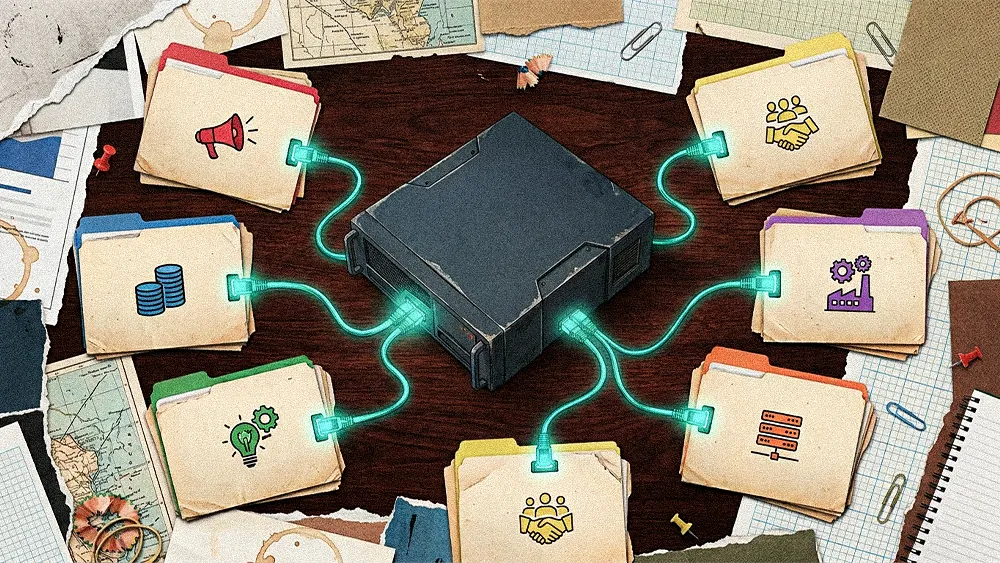

"Governance can’t just be engineered hygiene anymore. It has to operate like financial risk management, because stability, trust, and upgrade confidence directly impact our customers’ revenue models," said Melanahalli. At Cisco, that shift is not theoretical. Melanahalli's team manages a software portfolio worth billions in quarterly revenue, spanning enterprise switching, routing, SD-WAN, wireless, and industrial IoT, all running on a single IOS codebase across roughly 70 hardware platforms. The governance model holding that together has had to evolve.

Driving this change is an erosion of trust, born from repeated failures that create expensive friction. This "operational noise" buries risk and can make organizations slow to adopt new technology. In an environment managing 2,000 routers and 2 million switches, an upgrade that breaks a retail franchise's custom tooling or removes a key capability without warning is a major failure.

The upgrade gamble: "The biggest worry for every customer was the fundamental question of whether they could upgrade without knowing if it would work well. This was a problem because after an upgrade, their configuration sets sometimes wouldn't come back correctly and nothing would work," said Melanahalli. The result was a costly workaround: customers built their own lab environments to qualify software before touching production.

The trust tax: "This lack of trust created a massive delay. Even when we provided a quality software load, customers would take three to nine months to qualify it in their own labs before upgrading. That is not a win-win situation for anyone," said Melanahalli. That lengthy lag represents real cost, absorbed on both sides of the relationship.
