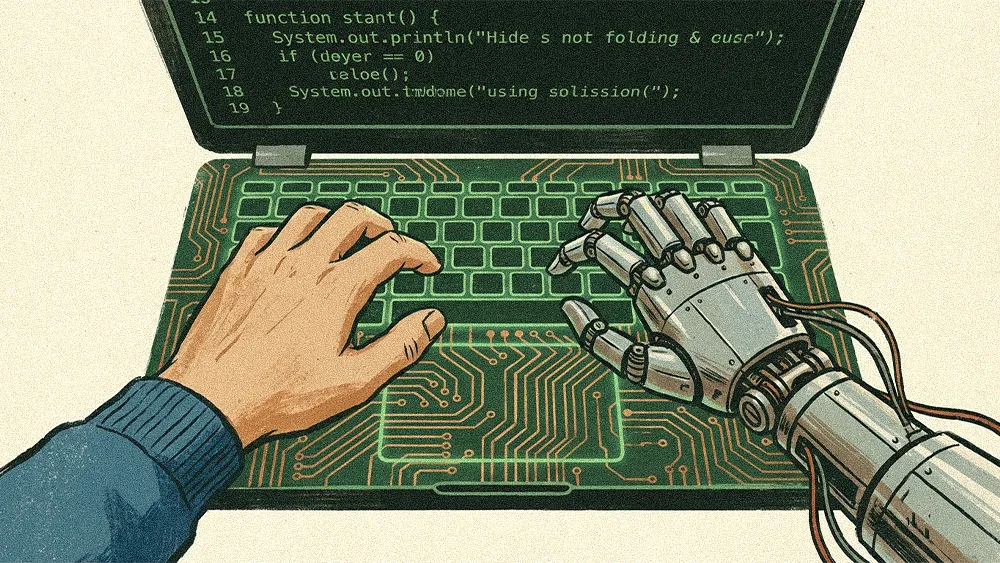

AI-assisted development is not just accelerating how code gets written. It is restructuring how enterprise engineering teams operate, from how pods are organized to how performance is measured to who is accountable when things break. For CIOs still optimizing for speed-to-deploy, the shift demands a broader lens. The real question is how engineering teams structure context, oversight, and accountability in an AI-driven development cycle.

Ravi Evani is GVP, CTO, and Regional Engineering Lead for North America at Publicis Sapient, a technology company within Publicis Groupe. With more than two decades of experience building consumer-facing experiences and mission-critical distributed systems, Evani now leads a global engineering organization of people and AI agents. That vantage point gives him a direct view into how AI is reshaping the structural DNA of enterprise software teams.

"AI is no longer a tool. It's a first-class citizen in the development process, connecting context across design, engineering, and product management," Evani said. The transformation he described goes well beyond coding copilots. Organizations getting ahead are connecting AI context across every part of the software development lifecycle: meetings, architectural guidelines, production logs, customer feedback, and company strategy. The result is a fundamental shift in how teams collaborate and execute.

Shared context, not siloed tools: "If you and I are engineers, it's not that we are working on our own agentic coding tools. Both of us have to be on the same tool which shares this entire context," Evani said. "Everything is connected and you have to bring all of that context together."

Pods of one: Engineering pods that once held seven or eight people are being compressed to one to three. The most effective configuration is a single engineer who understands the full picture: design, product, and business domain. "The best pods are pods of one," Evani said. "Engineers who were already full-stack are now even more fearless with AI. They're going into design, they're going into product management."

The premium on talent: That compression puts an enormous premium on polyglot engineers who can hold the full picture in their heads. "The premium of really strong engineers has gone up 50x now. In this new world, they can replace a lot more given this whole back and forth."
