A fundamental misunderstanding about AI is leading many organizations astray. Most assume risk lives in the system itself: the large language model, the algorithm, the little black box. The truth is more nuanced. Contrary to popular belief, AI risk is defined more by how the technology is applied than by the tool itself. An LLM considered low-risk in one context can become a catastrophic liability in another. To navigate this reality, some experts say leaders need a fresh model for governance, one that shifts focus from the tool to the task.

We spoke with Arvinda Rao, the Director of Compliance for AI Governance, Responsible AI, Security, Privacy and Risk at Securiti. With over 16 years of experience in risk and compliance at firms like IBM and Accenture, Rao has been on the front lines of solving this enterprise challenge for some time. Today, he's the pioneer behind the Data Command Center, a centralized platform enabling the safe use of data and GenAI. According to Rao, the only way to manage AI effectively is by dismantling the one-size-fits-all approach and rebuilding governance from the ground up, centered on a single organizing principle: the use case.

"Use-case-driven implementation is essential for success with AI," Rao explained. "The underlying technology might stay the same, but each application introduces a different risk. Now, use-case-based risk management is critical as a result. The notion that risk is defined by context rather than code is key to unlocking secure innovation," Rao said, "especially when the same AI model deployed across different business functions carries radically different threats."

Same model, different dangers: As an example, Rao considers HR. "When you're using an AI system to screen candidates," he explained, "the regulatory risk is higher. Denying someone an employment opportunity or unfairly judging them based on their resume is risky. Use that same AI system to generate a report, and you'll get a different type of risk. Or, in customer support, if AI hallucinates and gives you some bad advice, that creates another risk."

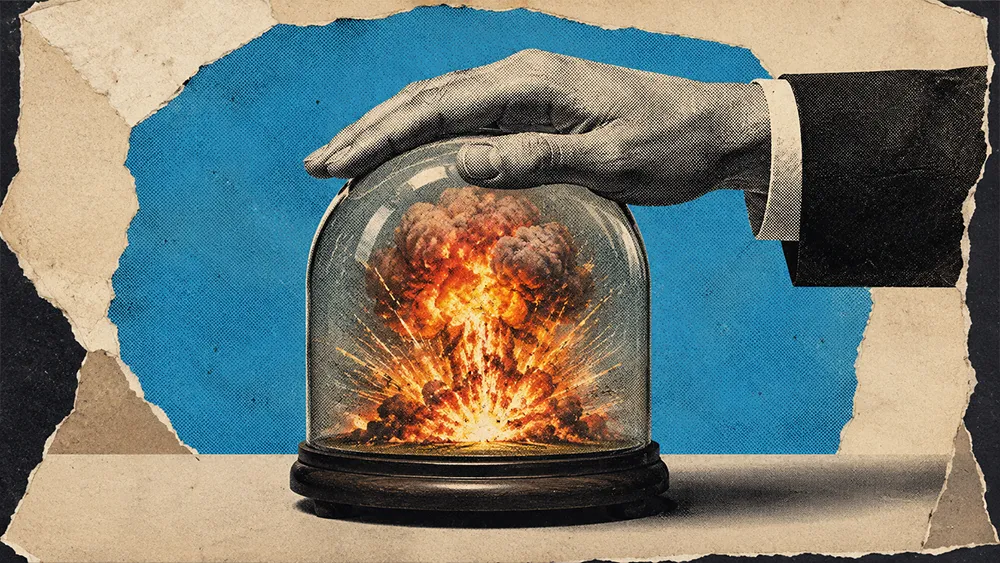

Unfortunately, this conundrum exposes a common yet critical error in corporate strategy. Instead of starting from scratch, many organizations opt to layer AI governance onto their existing frameworks. From Rao's perspective, this approach is destined to fail. Ignoring the unique, contextual nature of AI risk, he warned, can create a false sense of security.

The GRC trap: "Companies are adding AI governance on top of their existing GRC," Rao noted. "But very few recognize the need for a separate function that's focused entirely on governing AI. Most see it as one more layer when, in reality, it needs a dedicated team."
