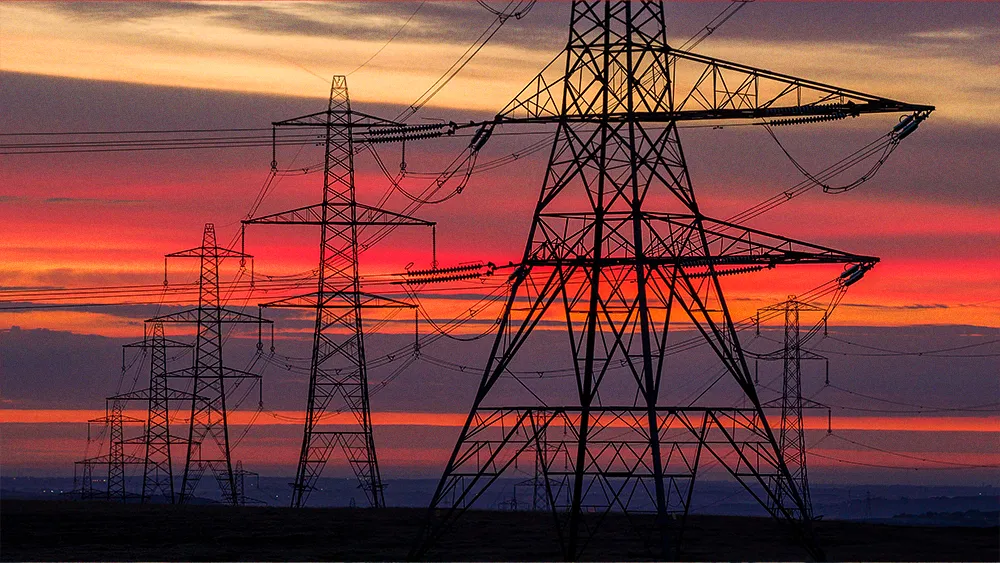

Artificial intelligence is running up against the physical limits of energy infrastructure. Hyperscalers have doubled capital plans to build AI capacity, but they are straining a North American power grid engineered for one percent annual load growth and decade-long planning cycles. The result is a defining bottleneck, a collision between digital ambition and analog reality that is reshaping enterprise AI strategy, national competitiveness, and economic development.

Theodore Paradise, Chief Policy and Grid Strategy Officer at CTC Global, spent 15 years with ISO New England, the region's independent grid operator, before moving into transmission strategy and regulatory advocacy across the private sector. That institutional grounding shapes how he reads the current moment. He said the industry is facing a fundamental reordering, one that forces a return to first principles.

"AI is compute, and compute is energy. For all the grand ambitions and notions, you need energy,” Paradise said. “There’s no version where we didn’t get the energy but we did the AI anyway. That’s just not how it works. We've seen from consumer electronics up to the data center scale that efficiency presses forward, and some of the gains are tremendous, but those are easily outstripped by the compute demands." This reframes a debate that has focused almost entirely on chips, models, and compute, redirecting it to the physical infrastructure technology depends on.

At the heart of the challenge are the mismatched timelines of digital speed and physical reality. The current grid, with many wires dating back to a "mid-1900s vintage," was built for a slow, predictable world. AI demand is anything but. This creates a difficult equation where nearly half of projects already face delays.

The physics of the problem: "You can build a new, very large data center in eighteen months and they're expecting power to be there. But the new transmission needed to interconnect that new power is on a seven- to ten-year-or-longer time frame, a timeline constrained by the physics of infrastructure," Paradise told CIO News. The mismatch is creating a disconnect between what corporations know internally and what they project publicly. While many executive teams understand the challenge, their announcements about multi-gigawatt data center plans can create a misleading perception that power and infrastructure are already secured, leaving core business risk underweighted in enterprise AI planning.

Highway to hardware: This pressure is prompting a radical rethinking of how infrastructure gets built. Where tech giants once signed Power Purchase Agreements to secure their energy source, the focus has shifted to securing delivery. Google's partnership with CTC Global is one example, funding advanced conductors that can double grid capacity in months instead of a decade. "The time needed to get upgrades on the existing grid is pushing large users of power to another set of off-grid resources," Paradise said. "Think of it as we have a highway system, but now we're going to build a whole parallel highway system just for the sports cars. The moment we're in has some of that." But building private infrastructure shifts the financial burden onto remaining ratepayers, impacting everything from manufacturing to consumer electric bills.
