For many legacy organizations, integrating artificial intelligence is not simply a matter of new technology but of organizational readiness. Rather than solving existing problems, AI often exposes them, bringing long-standing issues like disconnected systems, competing priorities, and unclear data definitions to the surface. The real test lies not in deploying AI itself but in creating the alignment needed to make it work.

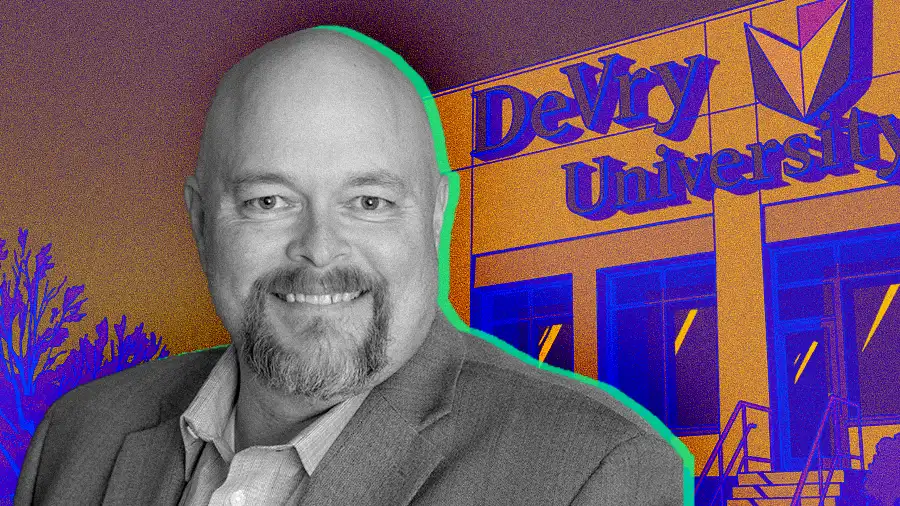

Chris Campbell, Chief Information Officer at DeVry University, believes the path to effective AI adoption begins with internal clarity and discipline. With more than two decades of experience leading digital transformation, Campbell has served as an advisory board member for strategic groups such as Evanta, a Gartner Company, and as Senior Director at Adtalem Global Education. He brings a clear perspective on what it takes to turn technological potential into measurable impact, and in his view, an organization must first get its own house in order before it can get AI right.

"If your own core dataset is confused, how can we hope for something like AI to interpret it appropriately? The truth is that every team sees data through its own lens. The definition of a customer may mean one thing to marketing and something entirely different to customer success. Until an organization agrees on what its data actually means, AI will only amplify the confusion that already exists," Campbell said. His core argument is that inconsistent data definitions and competing interpretations across departments prevent intelligent systems from delivering reliable value.

To combat this, Campbell championed the creation of DeVry's AI Lab, a governance body designed to elevate AI to a C-suite-level strategic priority. Leaders proposing an initiative must formally present how it will work, its ROI, and its potential gaps. Because the executive team approves projects as a unit, every initiative is cross-functionally aligned and tied to a clear business outcome.

Steering in the right direction: "We use a steering committee comprised of most of our executive team to ensure every initiative is aligned with our current strategies. It's a model we've used for a long time, and it works particularly well for governing our AI Lab," Campbell explained.

The power to say no: For the governance model to have teeth, it can't just be a rubber stamp. According to Campbell, it also has to serve as a quality control mechanism. "We were working with a partner on a self-service chatbot for employees, and it became obvious their team didn't have the necessary skills, even if the technology was okay. That's the kind of engagement we will terminate."

Fluency over features: "We're not shopping for AI; we're looking for AI fluency. We need tools that can think in the language of our enterprise, not just predict within it. I refuse to adjust our university's mission or KPIs to align with a tool based on a vendor's assumption that their AI is automatically the right way to do things." It's a reminder that true innovation is not about adopting the newest technology but about choosing tools that understand and strengthen the organization’s own purpose.
