Enterprise AI is hitting a brick wall, and the obstacle is not the model or the data. It is the foundational work most organizations skip before either enters the picture. Companies rush to deployment carrying fragmented datasets, legacy infrastructure, and integrations that were never stress-tested under real production conditions. The result: only 6% of organizations see significant bottom-line impact from AI. The differentiator is not the sophistication of the tools. It is whether the organization was genuinely ready to use them.

Ramachandran Sasisekaran is a board member at Gridzy and a fractional CTO/CIO at The Fractional Executive Network, India. Over a 28-year career building and scaling Global Capability Centers for Unilever, Tesco, Microsoft, and News Corp, he developed deep expertise in enterprise transformation, through work that included a $22 million cloud consolidation at News Corp. He has observed that the leaders who succeed with AI are not the fastest movers; they are the ones who take accountability for organizational readiness before the first vendor meeting.

"Many organizations are not ready for AI. It is important to do a discovery stage to understand how your datasets are, how your integrations are, and where the duplications sit," said Sasisekaran. The gap becomes visible at the worst moment: when vendor demos that performed flawlessly on sample data meet real production systems, compliance requirements, and enterprise integrations for the first time.

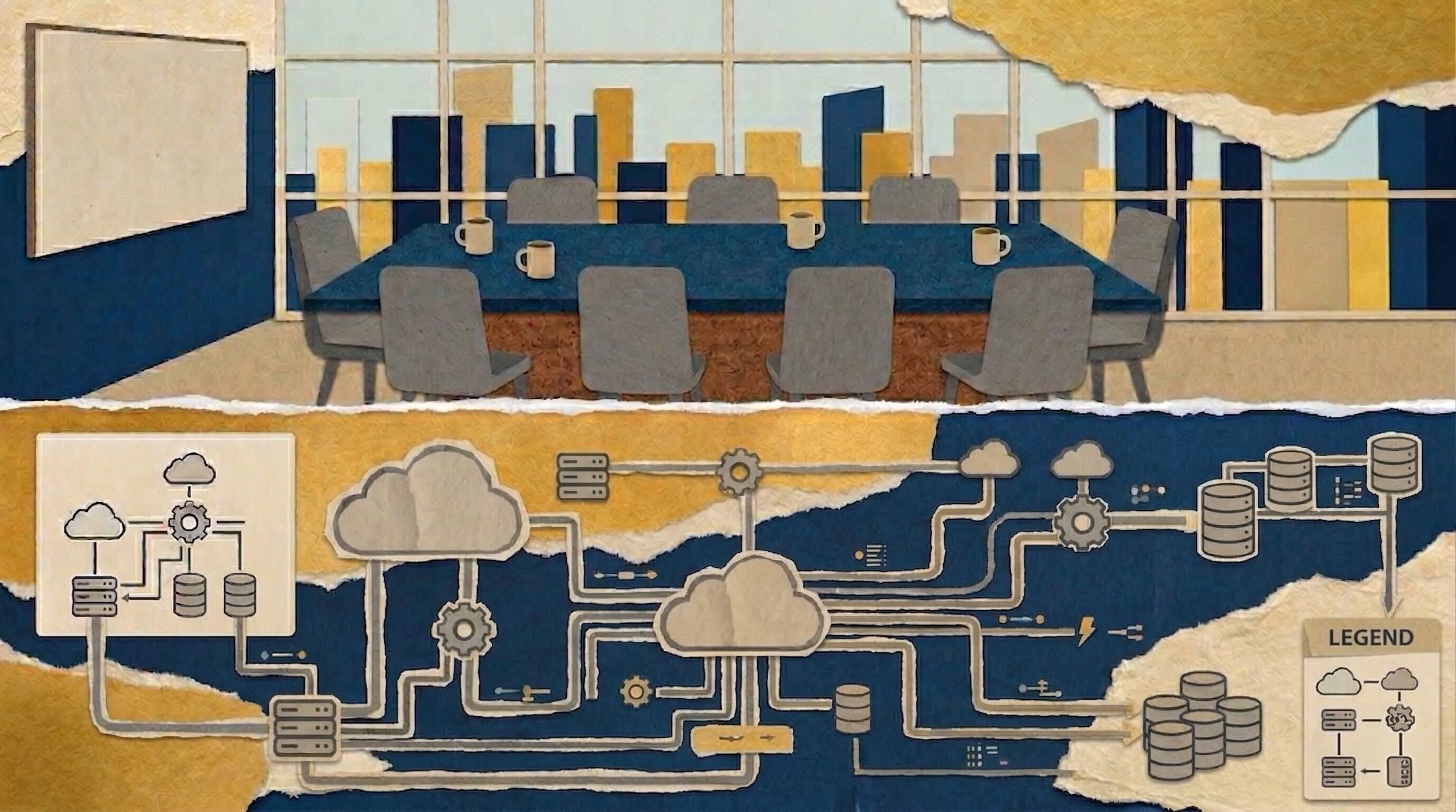

Most organizations skip straight from roadmap to rollout without stopping to audit what they are actually building on. Before looking at vendors, Sasisekaran recommends running an internal discovery stage. That means tracking down where data lives, how systems integrate, and where duplicate tools have crept into the stack. Consolidating overlapping systems and standardizing datasets, perhaps by building on a unified PostgreSQL-based data and AI foundation, closes the infrastructure gaps that surface as failures later.

Smoke and mirrors: "Whenever vendors come in to demonstrate their AI capabilities, we showcase only the non-essential data due to confidentiality," said Sasisekaran. When real enterprise data enters the picture, bringing with it real-world banking systems, compliance mandates, production risk, and live system integrations, those flawless proofs of concept often tell a different story.

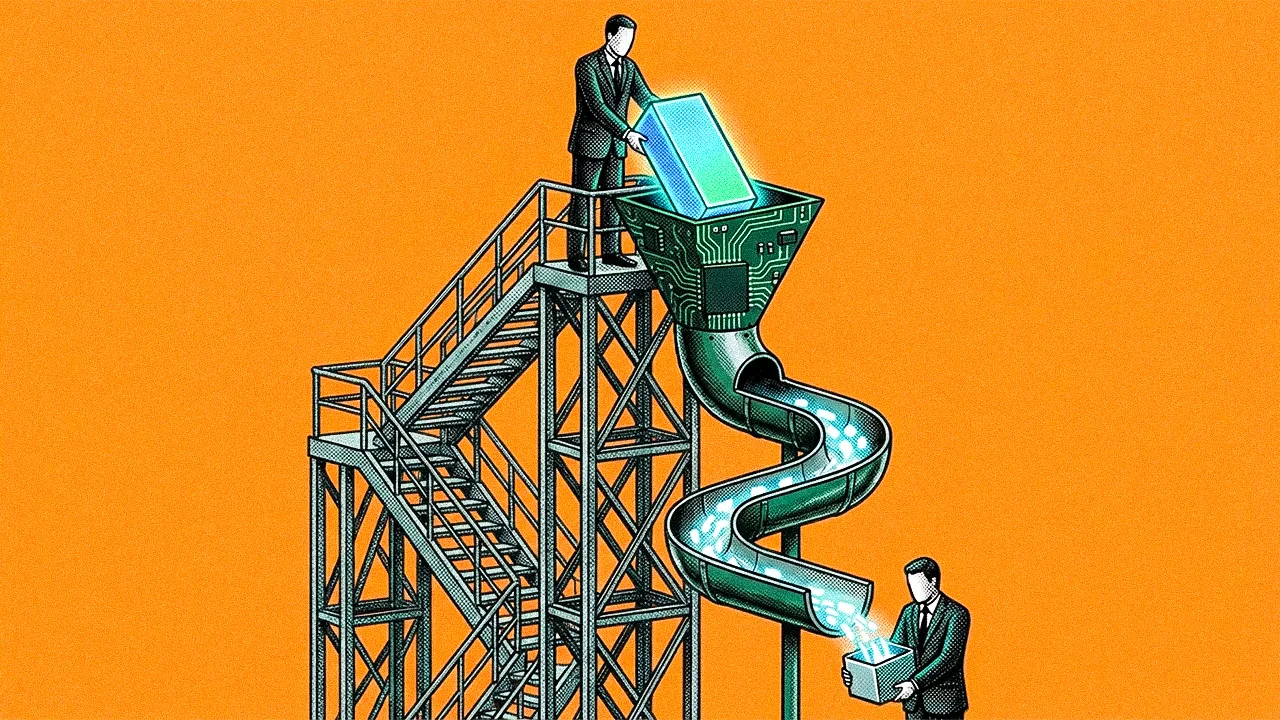

The fail-fast fallacy: "There are instances where entire datasets vanished because the cloud implementation was done in such a way that no data was available, meaning you need to start from scratch," he cautioned. The "fail fast" instinct that works in controlled pilots becomes a liability when enterprise data is on the line. A failed rollout is not a learning moment. It is a recovery problem. Building for production-grade deployment from day one, rather than retrofitting after failure, is what separates pilots that scale from ones that stall.

The technical architecture is only half the problem. A Marshall Goldsmith-trained leadership coach, Sasisekaran views psychological safety and communication as operational prerequisites for data integrity. Displacement anxiety does not announce itself. It shows up as quiet resistance, incomplete adoption, and metrics that look fine until they don't. Clear feedback loops and a credible case for what employees gain from the change are not morale initiatives. They are data quality controls.

The missing manual: "When projects are underway, people leave a gap in the user and training manuals that need to be created," he noted. When that documentation gap appears, it is rarely a resourcing problem. It is a signal that the team was never fully brought into the change.

Accidental adversaries: "If I don't realize that I have to interact with these systems first and foremost, I am going to be an adversary of that change because I won't accept it," he explained. For Sasisekaran, the change management plan is not a soft complement to the technical rollout. It is a prerequisite of equal weight.

When those prerequisites are missing, organizations rarely lack confidence at launch. What they lack are the warning signals that would have tempered it. Organizational pressure to declare victory is one of the most reliable predictors of AI failure. Executives want speed, teams want credit, and the metrics closest to hand tend to confirm what everyone wants to hear. Sasisekaran has seen this produce two distinct failure modes: programs that collapse in support and maintenance after an early thumbs-up, and deployments that look flawless on internal dashboards while external users quietly hit dead ends. The organizations that avoid both govern before they build (clear objectives, phased budgets, defined checkpoints) and build internal teams capable of challenging vendor recommendations rather than ratifying them. They also keep channels open where concerns can surface without career risk, because the problems that appear late were almost always visible earlier to someone who didn't feel safe saying so.

Popping champagne early: "Because the CIO or CDIO wants things to be faster, everyone shows their victory thumbs up," he said. When pressure to deliver compresses timelines, teams declare wins before the work has been stress-tested. Once support and maintenance teams inherit the system, performance that launched in the mid-90s can bleed into the single digits. "The same CIO or CDIO who claimed victory will eventually come back realizing their job is at stake," he added. "Calm down, don't call out victory early."

Perfect on paper: "Internally it is a success: 100% availability, all the forms are working, every integration is amazing," he said. "Externally, users often find they have to navigate through ten different places. The automation is not helping, and where human intervention is needed, they cannot get it," he added. Rather than starting with AI features, leaders should return to the fundamentals that governed investment decisions before this wave: finance, talent, capabilities, and business need. Before any deployment is approved, leaders need to be prepared for friction and willing to own the shocks that follow. These are not outlier concerns. They are the questions C-suites across industries are grappling with heading into 2026.

There is one more cost that rarely appears on the dashboard. As organizations automate the tasks that once trained junior staff, they are quietly dismantling the pipeline that produces the senior expertise they will need to sustain those same AI programs. The expertise organizations will need in five years is built in the roles they are cutting today. The talent question Sasisekaran returns to most urgently is not whether AI will replace people, but whether organizations will invest in the people AI needs to work alongside. His prescription is direct: keep hiring at entry level, treat the organization itself as a training institution, and invest in enabling experienced staff rather than replacing them.

"If you are saving a penny today, you are going to pay big dollars tomorrow," said Sasisekaran. "AI is not a replacement for talent," he added. "As leaders, we need to work on enabling our teams to adopt AI as an element. Repurposing your resources is critical."

Every major technology transition, from mainframes to mobile, has eventually been absorbed. The difference this time is the speed. "The only thing is the speed at which AI is going is not at the same speed which the earlier transition happened," he said. "This is going a little faster now. We need to educate people also at the same speed."
