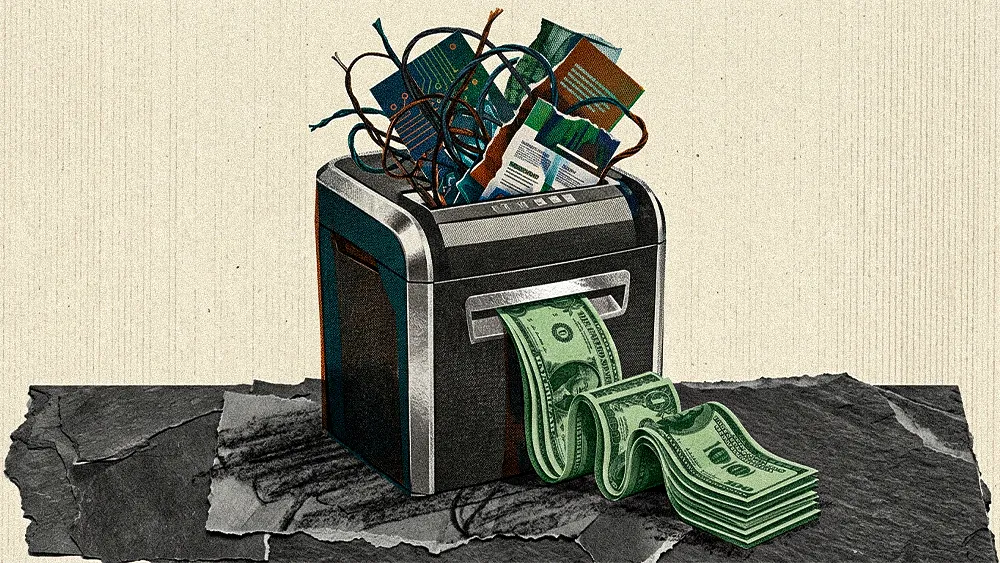

The AI industry is no longer obsessed with whose model is cleverer. The real contest is unfolding in concrete, steel, and megawatts. Anthropic’s plan to pour tens of billions into its own data centers shows how the center of gravity has shifted toward companies that control every layer of their stack. The next wave of AI leaders will be the ones willing to build the foundation they plan to stand on.

Doneyli De Jesus is an AI Architect and Strategist with over 20 years of experience translating C-suite goals into technical reality. Currently a Solutions Architect at ClickHouse, with a track record of architecting AI solutions at giants like Snowflake and Elastic, De Jesus has a frontline view of this infrastructure landscape. For him, Anthropic's move wasn't just smart. It was inevitable.

"If you want to optimize models effectively, you need to own the stack from silicon to software. That’s what Anthropic is doing now," said De Jesus. According to him, Anthropic's strategy follows a path already forged by rivals. OpenAI paved the way with its deep partnership with Microsoft, later expanding to include Oracle for more capacity. Meanwhile, Google has leveraged its own massive, long-standing infrastructure to push the boundaries of what's possible.

But why now? De Jesus explained that the timing is driven by two distinct forces: one technical, the other financial.

Durable by design: With model breakthroughs slowing, the edge moves to hardware optimization, a long-term effort that only becomes possible once the business has a stable foundation. "Anthropic is at a stage where they have a stable base of enterprise customers and a healthy book of business committed for years into the future," De Jesus said. "Now they can go out and make those investments without being at risk of overleveraging on debt."

Small wins, big lift: De Jesus said Anthropic’s focus on enterprise customers has created a durable revenue base that makes this kind of expansion possible. He noted that Anthropic now stands out as the only major model maker running across all three major cloud platforms: AWS, Google, and Microsoft. "Because they own the stack end-to-end, they can find those marginal efficiencies. They train these models to be optimized for their own infrastructure, and they know the infrastructure so well that they can find these benefits."
